Building Text with AI!

Welcome to AI-powered text generation! AI systems can now produce written content, from chatbot replies to auto-generated articles, and they are changing how text gets written. We’ll explore AI’s potential in text creation and how it’s changing communication in the digital era. Whether you’re a business owner, a writer, or just curious about the future of writing, AI text generation is worth exploring.

Getting Ready to Write

What You Need: Setting Up Your Tools

Writing with AI requires a few specific tools. This walkthrough follows TensorFlow’s approach: build a character-based Recurrent Neural Network (RNN) for text generation and train it on a dataset of Shakespeare’s writing. TensorFlow’s tf.keras API, running in eager execution mode, handles processing the text, defining the model, and training it.

Because a neural network works with numbers rather than characters, each character is converted to an integer ID with the StringLookup layer. The encoded text is then split into training examples (input sequences) and targets (the same sequences shifted one character ahead).
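The character-to-ID mapping is easy to picture in plain Python. The sketch below is not the StringLookup API; it only mimics the idea: build a sorted vocabulary of the characters in the text, reserve ID 0 for unknown characters (similar to StringLookup’s default out-of-vocabulary slot), and keep an inverse table so IDs can be turned back into text. The sample line and helper names are illustrative.

```python
UNK = "[UNK]"  # placeholder for characters not seen in the training text


def build_vocab(text):
    """Return a sorted list of unique characters, with the unknown token at index 0."""
    return [UNK] + sorted(set(text))


def make_lookups(vocab):
    """Build char->ID and ID->char dictionaries from a vocabulary list."""
    char_to_id = {ch: i for i, ch in enumerate(vocab)}
    id_to_char = dict(enumerate(vocab))
    return char_to_id, id_to_char


def encode(text, char_to_id):
    # Characters missing from the vocabulary fall back to ID 0, the [UNK] slot.
    return [char_to_id.get(ch, 0) for ch in text]


def decode(ids, id_to_char):
    return "".join(id_to_char[i] for i in ids)


sample = "First Citizen:\nBefore we proceed any further, hear me speak."
vocab = build_vocab(sample)
char_to_id, id_to_char = make_lookups(vocab)
ids = encode("hear me", char_to_id)
print(ids)
print(decode(ids, id_to_char))  # hear me
```

In the TensorFlow tutorial, the inverse direction is handled by a second StringLookup layer created with invert=True.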

To go further, TensorFlow’s documentation covers advanced customized training, and other resources explain how to build a text generation model with the transformer architecture, covering its key components and how transformers work.

Getting Your Hands on the Shakespeare Dataset

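The Shakespeare dataset is a single plain-text file of Shakespeare’s writing, and TensorFlow’s text generation tutorial downloads it as part of its setup. The goal is to train a character-based Recurrent Neural Network on it: given a sequence of characters, the model learns to predict the next one.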

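To convert characters to a numeric representation, use the StringLookup layer. The model itself is built from three kinds of layers: an Embedding layer that turns each character ID into a vector, a GRU (gated recurrent unit) layer that carries information along the sequence, and a Dense layer that outputs a score (logit) for every possible next character.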
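The GRU layer is the part that carries information from one character to the next. To make its gating less of a black box, here is one time step of a GRU cell in plain Python, using one common formulation. Keras’s GRU layer uses a closely related variant (by default it applies the reset gate after the recurrent matrix multiplication), and sources differ on which gate term multiplies the old state, so read this as an illustration of the idea rather than a re-implementation of tf.keras.layers.GRU. The weight dictionary and its keys are a hypothetical layout chosen for readability.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(W, v):
    """Multiply matrix W (a list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def gru_step(x, h, p):
    """One GRU time step.

    x: input vector (for example, a character embedding)
    h: previous hidden state
    p: dict of weights; the keys are illustrative, not a Keras layout
    """
    # Update gate z: how much of the state is replaced by the candidate.
    z = [sigmoid(a + b + c)
         for a, b, c in zip(matvec(p["Wz"], x), matvec(p["Uz"], h), p["bz"])]
    # Reset gate r: how much of the old state feeds into the candidate.
    r = [sigmoid(a + b + c)
         for a, b, c in zip(matvec(p["Wr"], x), matvec(p["Ur"], h), p["br"])]
    reset_h = [ri * hi for ri, hi in zip(r, h)]
    # Candidate state built from the input and the reset-scaled old state.
    h_tilde = [math.tanh(a + b + c)
               for a, b, c in zip(matvec(p["Wh"], x), matvec(p["Uh"], reset_h), p["bh"])]
    # Blend the old state and the candidate.
    return [(1 - zi) * hi + zi * hti for zi, hi, hti in zip(z, h, h_tilde)]


def zeros(rows, cols):
    return [[0.0] * cols for _ in range(rows)]


hidden, emb = 2, 3
params = {
    "Wz": zeros(hidden, emb), "Uz": zeros(hidden, hidden), "bz": [0.0] * hidden,
    "Wr": zeros(hidden, emb), "Ur": zeros(hidden, hidden), "br": [0.0] * hidden,
    "Wh": zeros(hidden, emb), "Uh": zeros(hidden, hidden), "bh": [0.0] * hidden,
}
h = gru_step([1.0, 2.0, 3.0], [0.8, -0.4], params)
print(h)  # [0.4, -0.2]
```

With all-zero weights both gates sit at 0.5 and the candidate is 0, so the cell simply halves the previous state; training moves the weights away from this neutral point.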

Before training, attach an optimizer and a loss function to the model, and configure checkpoints that save the model’s weights during training so you can monitor progress and recover from interruptions.
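For next-character prediction, the loss is typically sparse categorical cross-entropy computed on the model’s raw scores (logits); in Keras that is tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True). The plain-Python sketch below computes the same quantity for a single prediction and checks a useful sanity property: a model that gives every character the same score has a loss of exactly ln(V) for a vocabulary of size V. The vocabulary size of 65 is illustrative.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax: subtract the max before exponentiating."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum for x in logits]


def sparse_cross_entropy(logits, target_id):
    """Cross-entropy for one example, where target_id is the index of the correct class."""
    return -log_softmax(logits)[target_id]


def mean_loss(batch_logits, targets):
    return sum(sparse_cross_entropy(l, t) for l, t in zip(batch_logits, targets)) / len(targets)


vocab_size = 65
uniform = [0.0] * vocab_size                 # an untrained model with no preference
print(sparse_cross_entropy(uniform, 7))      # about 4.174, i.e. ln(65)

confident = [0.0] * vocab_size
confident[7] = 10.0                          # strongly favors the correct character
print(sparse_cross_entropy(confident, 7))    # much smaller
```

This is why a freshly initialized model’s loss should sit near ln(V): a starting loss far above that suggests something is wrong with the setup.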

The tutorial walks step by step through processing the text, defining the model, and training it to generate coherent Shakespeare-style text.

It uses TensorFlow’s tf.keras with eager execution throughout, and it ends by demonstrating how to use the trained model to generate text and how to customize training for improved results.

Reading and Understanding the Dataset

Understanding the dataset is important for building a text generation model.

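The key steps between raw text and a trainable model are tokenization (splitting the text into units such as characters), dataset preparation, input embedding (turning each token ID into a vector), and, in transformer models, positional encoding (telling the model where each token sits in the sequence). Getting these right is what lets the model work effectively.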
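Positional encoding is easiest to understand in code. A transformer has no built-in sense of word order, so a position-dependent vector is added to each token’s embedding. The sketch below uses the sinusoidal scheme from the original transformer design (sine on even dimensions, cosine on odd ones, each pair sharing a frequency); many models learn positional embeddings instead, so treat this as one option among several.

```python
import math


def positional_encoding(num_positions, d_model):
    """Return a num_positions x d_model table of sinusoidal position vectors."""
    table = []
    for pos in range(num_positions):
        row = []
        for dim in range(d_model):
            # Dimensions 2i and 2i+1 share the frequency 1 / 10000^(2i / d_model).
            i = dim // 2
            angle = pos / (10000 ** (2 * i / d_model))
            row.append(math.sin(angle) if dim % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def add_positions(embeddings):
    """Add position vectors to a sequence of token embeddings of equal width."""
    pe = positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(emb, pos)] for emb, pos in zip(embeddings, pe)]


pe = positional_encoding(4, 6)
print(pe[0])  # [0.0, 1.0, 0.0, 1.0, 0.0, 1.0]
```

Because every position gets a distinct pattern, two identical characters at different places in the input end up with different vectors, which is what lets attention take order into account.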

The dataset’s structure and format determine how it has to be processed: a single long text file, like the Shakespeare dataset, must be split into sequences before a model can learn from it.

For this text, the key processing steps are converting characters to numbers with the StringLookup layer and creating training examples and targets.

The tutorial stresses that understanding the dataset is a prerequisite for training a useful model on it.

Strategies such as using the transformer architecture, building a model with Embedding, GRU, and Dense layers, and practicing advanced customized training can deepen this understanding.

Finally, generating text with the trained model, and improving the results by adjusting parameters, shows how much good text generation depends on using the dataset well.

Making Text Into Numbers

Turning Shakespeare’s Words Into Numbers

Neural networks work on numbers, so Shakespeare’s text must be converted before training. In a character-level model, the StringLookup layer maps each character (not each word) to an integer ID.

The next step is to create training examples and targets from those IDs. With the data in that form, the AI’s writing challenge can be set up by defining a model with Embedding, GRU, and Dense layers that predicts the next character in a sequence.

The goal of training on Shakespeare’s text is a model that can generate new text in his style. Getting there involves attaching an optimizer and a loss function, and configuring checkpoints to monitor training progress.

Results can then be improved by adjusting parameters. The same steps also apply when building a text generation model with the transformer architecture instead of an RNN.

Setting Up the Writing Challenge for the AI

Setting up the writing challenge for the AI takes several steps. First, the text is processed: characters are converted to a numeric representation with the StringLookup layer, and training examples and targets are created.

Next, a model is built from Embedding, GRU, and Dense layers to predict the next character in a sequence. The model is then trained by attaching an optimizer and a loss function and configuring checkpoints to monitor training progress.

Once trained, the model can generate coherent text, and the output can be improved further by adjusting parameters.

The Shakespeare dataset suits this challenge well: it is an extensive, well-known body of work, so it gives the model plenty to learn from and makes it easy to judge whether the generated text sounds like Shakespeare.

The training examples and targets are what guide the model toward the patterns and nuances of Shakespeare’s writing. By learning from them, the model improves its ability to generate coherent, contextually appropriate text that emulates Shakespeare’s style and language.
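One parameter commonly adjusted at generation time is the sampling temperature: the model’s logits are divided by a temperature before the softmax, so values below 1 make the output more predictable and values above 1 make it more varied. TensorFlow’s tutorial applies a temperature in its one-step generator; the function below is an independent plain-Python sketch of the same idea, with a seeded random generator so runs are reproducible. The example logits are made up.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities after scaling them by 1 / temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_id(logits, temperature=1.0, rng=random):
    """Draw one token ID from the temperature-scaled distribution."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.1]
for t in (0.5, 1.0, 2.0):
    print(t, [round(p, 3) for p in softmax(logits, t)])

rng = random.Random(0)
print([sample_next_id(logits, 0.5, rng) for _ in range(10)])
```

Printing the three distributions shows the effect directly: at 0.5 the top choice dominates, while at 2.0 the probabilities move closer together.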

Making Examples and Goals for Training

Preparing the training data involves converting characters to numeric IDs with a StringLookup layer and then creating training examples and targets. Each target is its example shifted one character ahead, which teaches the model the sequential order of characters and how to predict the next one.

The model that learns from these examples is built from Embedding, GRU, and Dense layers, and its training goal is to predict the next character accurately. An optimizer and a loss function are attached to the model, and checkpoints are used to monitor training progress.

In short, the text must be processed carefully, the model must be built to capture the sequence of characters, and training needs a clear objective (the loss) and markers of progress (checkpoints), so you can tell that the model is learning and generating coherent text.
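In TensorFlow’s tutorial, the encoded text is cut into chunks of seq_length + 1 IDs, and each chunk becomes an (input, target) pair in which the target is the input shifted one step, so at every position the model learns to predict the following character. Here is the same logic on plain Python lists (the tutorial does it with tf.data). Characters are used instead of IDs only to keep the output readable, and the seq_length value is illustrative.

```python
def make_chunks(ids, seq_length):
    """Cut a list into non-overlapping chunks of seq_length + 1, dropping any remainder."""
    size = seq_length + 1
    return [ids[i:i + size] for i in range(0, len(ids) - size + 1, size)]


def split_input_target(chunk):
    """Input is every element but the last; target is every element but the first."""
    return chunk[:-1], chunk[1:]


text = "To be, or not to be"
for chunk in make_chunks(list(text), seq_length=4):
    inp, tgt = split_input_target(chunk)
    print(repr("".join(inp)), "->", repr("".join(tgt)))
```

In the tutorial, these pairs are then shuffled and batched before training.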

These considerations are important for successful training of an AI in text generation.

Writing With The AI

How the Writing AI Figures Out What to Say

The process starts with the Shakespeare dataset: its characters are converted to numeric IDs with a StringLookup layer, and training examples and targets are created from them.

Next, a character-based Recurrent Neural Network model is built from Embedding, GRU, and Dense layers to predict the next character in a sequence.

The model is trained with an optimizer and a loss function, with checkpoints configured to monitor training progress. Through this training, the AI learns to generate coherent text by repeatedly predicting the next character and appending it to what it has written so far.

Its writing can be improved further through additional training and parameter tuning, using the additional resources and documentation as a guide. With better training, the model captures more of the underlying structure of the text data and therefore generates more coherent and diverse writing samples.
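The generation cycle itself is simple: predict the next character, append it, and feed the extended text back in. The loop below shows that cycle. To keep it self-contained, a bigram counter stands in for the trained RNN (it only looks at the previous character), and it picks the most frequent next character greedily instead of sampling; both are simplifications for illustration, not the tutorial’s model.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each character, which characters follow it."""
    follows = defaultdict(Counter)
    for prev, nxt in zip(text, text[1:]):
        follows[prev][nxt] += 1
    return follows


def predict_next(follows, context):
    """Stand-in for the RNN: pick the most common successor of the last character."""
    counts = follows.get(context[-1])
    if not counts:
        return None  # this character was never followed by anything
    return counts.most_common(1)[0][0]


def generate(follows, prompt, num_chars):
    """Autoregressive loop: predict, append, and feed the result back in.

    The prompt must be non-empty.
    """
    text = prompt
    for _ in range(num_chars):
        nxt = predict_next(follows, text)
        if nxt is None:
            break
        text += nxt
    return text


model = train_bigram("abcabcabd")
print(generate(model, "a", 5))  # abcabc
```

A real RNN replaces predict_next with a forward pass that carries its hidden state from step to step, but the outer loop keeps the same shape.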

Giving the AI a Quick Writing Test

- A quick writing test for AI involves processing the text, building the model, training the model, and generating text.

- The processing stage converts characters to numeric representation and creates training examples and targets.

- Building the model includes implementing layers like Embedding, GRU, and Dense to predict the next character in a sequence.

- Training the model involves attaching an optimizer and a loss function and configuring checkpoints to monitor training progress.

- Generating text demonstrates how to use the trained model to produce coherent text and enhance results by adjusting parameters.

- Testing a text generation model built on the transformer architecture also requires understanding that architecture.

- This includes tokenization, dataset preparation, input embedding, and positional encoding.

- Working through the code also gives insight into how large language models (LLMs), which build on the same ideas, are used in a wide range of applications.

- Challenges and limitations of giving the AI a quick writing test may include the complexity and time-consuming nature of building and training the model.

- Continuous improvements in parameters are needed to obtain optimal results.

- Additional resources and documentation support may be required for further exploration and understanding of the concept.

Teaching the AI To Write Better

Teaching Steps: Optimization and Dealing with Errors

The training process for text generation can be optimized to improve the AI’s writing and minimize errors. Useful techniques include careful tokenization and dataset preparation and, for more ambitious projects, more advanced language models.

In the character-based Recurrent Neural Network tutorial, the process involves:

- Converting characters to numeric representation.

- Adding the essential layers (Embedding, GRU, and Dense).

- Configuring parameters to generate coherent and accurate text.

Errors in the AI’s writing can be addressed by:

- Analyzing loss functions

- Configuring checkpoints for monitoring the training progress

- Fine-tuning the optimizer
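The optimizer is what actually changes the weights. The Keras tutorial compiles its model with the Adam optimizer; the sketch below implements the standard Adam update rule for a single scalar weight on a toy loss, (w - 3)^2, so you can see how the learning rate and the two running averages drive each step. The toy loss and hyperparameter values are illustrative assumptions; a real model applies the same rule to every weight.

```python
import math


class Adam:
    """Adam update rule for a single scalar parameter."""

    def __init__(self, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = 0.0  # running average of gradients
        self.v = 0.0  # running average of squared gradients
        self.t = 0    # step counter, used for bias correction

    def step(self, w, grad):
        self.t += 1
        self.m = self.beta1 * self.m + (1 - self.beta1) * grad
        self.v = self.beta2 * self.v + (1 - self.beta2) * grad * grad
        # Correct the bias toward zero that comes from starting m and v at 0.
        m_hat = self.m / (1 - self.beta1 ** self.t)
        v_hat = self.v / (1 - self.beta2 ** self.t)
        return w - self.lr * m_hat / (math.sqrt(v_hat) + self.eps)


def loss(w):
    return (w - 3.0) ** 2


def grad(w):
    return 2.0 * (w - 3.0)


w, opt = 0.0, Adam(lr=0.1)
for _ in range(500):
    w = opt.step(w, grad(w))
print(round(w, 3), loss(w))
```

Because Adam divides by the running scale of the gradient, its first steps move the weight by roughly the learning rate regardless of how large the gradient is, which is one reason it is a forgiving default.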

Continuous improvement of the AI’s writing skills can be achieved through:

- Constant fine-tuning

- Adjusting parameters

- Taking advantage of advanced techniques like large language models, input embedding, and positional encoding in the transformer architecture

These optimization and error handling strategies have the potential to significantly improve the AI’s text generation capabilities over time.

Remembering Good Writing with Checkpoints

Writers track their progress with milestones, and model training uses checkpoints the same way. A checkpoint is a saved copy of the model’s weights at a point in training, so you can see how far the model has come and return to a version that wrote well.

Just as SMART (specific, measurable, achievable, relevant, time-bound) objectives keep a writer focused, a measurable target such as the training loss, checked at each checkpoint, shows whether the model is still improving.

Reviewing checkpoints regularly supports continuous improvement: comparing the text generated at different checkpoints shows where the model still needs work and lets you keep the best-performing version.

Putting the AI Through Writing School

Text generation building involves several important steps:

- Converting characters to numeric representation.

- Creating training examples and targets.

- Using the StringLookup layer to convert characters into IDs the neural network can use.

- Training the AI on specific text, like Shakespeare’s writings.

- Defining the model with layers such as Embedding, GRU, and Dense.

- Predicting the next character in a sequence.

- Equipping the AI with an optimizer, a loss function, and checkpoints.

- Generating text using the trained model.

- Improving results by adjusting parameters.

- Exporting the trained model for future tasks.

By following these steps, the AI is prepared for writing challenges and can continue to learn and improve over time.

See the AI Write!

Letting the AI Show Off Its Writing

The AI’s writing ability can be tested by providing a small set of prompts and analyzing the responses.

To improve the AI’s writing, train it on a diverse range of texts so it learns a wider range of language and style.

Addressing errors means setting up a feedback loop, such as evaluating the generated text and adjusting training accordingly, so the model learns from its mistakes.

A model trained on varied text can then generate coherent, relevant text across different topics and genres, showing how well it adapts to different writing styles and structures.

Keeping the AI Writer Ready

How to Save Your AI Shakespeare

It’s important to take steps to preserve and maintain your AI Shakespeare model. This includes regularly backing up the model and its training data to prevent any loss of progress. Version control (for example, Git with a hosting service such as GitHub) helps safeguard against accidental loss or corruption of the model. Creating checkpoints that save the model’s weights during training allows easy recovery and continuation from a stable state after an unexpected interruption.
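In TensorFlow, the tutorial saves checkpoints with the tf.keras.callbacks.ModelCheckpoint callback, which writes the weights to disk during training. The framework-free sketch below shows the underlying idea: after each epoch, write the weights plus the epoch number to a file, then load the newest file to resume. The JSON format, file naming, and weight dictionary are illustrative assumptions, not the format TensorFlow uses.

```python
import json
import os
import tempfile


def save_checkpoint(directory, epoch, weights):
    """Write weights and the epoch number to ckpt_<epoch>.json and return the path."""
    path = os.path.join(directory, f"ckpt_{epoch:04d}.json")
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump({"epoch": epoch, "weights": weights}, f)
    # Write to a temporary file first, then swap it into place in one step,
    # so a crash mid-write never leaves a half-written checkpoint behind.
    os.replace(tmp_path, path)
    return path


def latest_checkpoint(directory):
    """Return the path of the highest-numbered checkpoint, or None if there is none."""
    names = sorted(n for n in os.listdir(directory)
                   if n.startswith("ckpt_") and n.endswith(".json"))
    return os.path.join(directory, names[-1]) if names else None


def load_checkpoint(path):
    with open(path) as f:
        state = json.load(f)
    return state["epoch"], state["weights"]


with tempfile.TemporaryDirectory() as ckpt_dir:
    weights = {"dense_bias": [0.0, 0.0]}
    for epoch in range(1, 4):
        weights["dense_bias"] = [b + 0.5 for b in weights["dense_bias"]]  # pretend training
        save_checkpoint(ckpt_dir, epoch, weights)

    # Later, or after a crash: resume from the newest checkpoint.
    epoch, restored = load_checkpoint(latest_checkpoint(ckpt_dir))
    print(epoch, restored)  # 3 {'dense_bias': [1.5, 1.5]}
```

Zero-padding the epoch number in the file name keeps alphabetical order identical to training order, which is what lets latest_checkpoint simply sort the names.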

To ensure accessibility and continued creative output, it’s essential to export the trained model for future use and maintain documentation on the specific settings and parameters used during training. By using these tools and methods, you can safeguard the AI Shakespeare model for ongoing use and further development.

Doing Even More With AI Writing

Extra Tips for Super AI Writing Skills

AI writing can be improved further in several ways: fine-tuning language models, using multi-head attention mechanisms for better context understanding, and integrating advanced training techniques for more nuanced text generation.

Multi-head attention in AI writing allows the model to focus on different positions and encode different aspects of the input. This provides a more comprehensive understanding of the context and enhances the quality of generated text.
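Multi-head attention can be sketched in a few lines. Each head runs scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V, on its own slice of the feature dimensions, and the heads’ outputs are concatenated. To stay short, this plain-Python version skips the learned query, key, value, and output projections that a real layer (such as tf.keras.layers.MultiHeadAttention) applies, and simply splits the input vectors evenly across heads; that simplification is an assumption for illustration.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)  # how much this query attends to each position
        outputs.append([sum(w * v[j] for w, v in zip(weights, values))
                        for j in range(len(values[0]))])
    return outputs


def multi_head_self_attention(x, num_heads):
    """Split each vector into num_heads slices, attend per head, then concatenate.

    Simplification: no learned Q/K/V/output projections (identity instead).
    """
    d_model = len(x[0])
    if d_model % num_heads:
        raise ValueError("d_model must be divisible by num_heads")
    d_head = d_model // num_heads
    head_outputs = []
    for h in range(num_heads):
        part = [vec[h * d_head:(h + 1) * d_head] for vec in x]
        head_outputs.append(attention(part, part, part))
    # Concatenate the heads' outputs position by position.
    return [sum((head[pos] for head in head_outputs), []) for pos in range(len(x))]


x = [[1.0, 0.0, 0.0, 1.0],
     [0.0, 1.0, 1.0, 0.0],
     [1.0, 1.0, 0.0, 0.0]]
out = multi_head_self_attention(x, num_heads=2)
print([[round(v, 3) for v in row] for row in out])
```

Because the two heads see different slices of each vector, they compute different attention weights over the same three positions, which is the "different aspects of the input" the paragraph above describes.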

Moreover, advanced techniques like transfer learning, model distillation, and reinforcement learning can be used to train AI models to write professionally. This involves fine-tuning them on specific datasets, distilling knowledge from large pre-trained models, and optimizing their performance through reward-based learning methods.

These extra tips and techniques contribute to the overall improvement of AI writing skills, ensuring more coherent, contextually relevant, and professional text generation.

The AI’s Writing Mechanics: Attention

Multi-head attention is central to how transformer-based models write. It lets the model attend to several positions at once, with each head focusing on a different aspect of the context, which leads to more coherent and contextually relevant text. The decoder layers build on this when generating the output sequence, refining each prediction using the text produced so far, which leads to smoother and more coherent text generation.

Training techniques also significantly impact the AI’s writing skills and performance. Optimizer selection, loss function configuration, and checkpoint monitoring all play a role in ensuring that the AI model is optimized for generating high-quality text. Effective training techniques help the AI refine its language generation capabilities, producing text that closely resembles human writing.

Making the AI Think With Multi-Head Attention

Multi-head attention helps the AI focus on different parts of the input. It enables weighing various aspects of the data simultaneously when processing information. This mechanism is useful in building a text generation model to analyze words and phrases more deeply, leading to improved comprehension and context awareness.

In the decoder layer, multi-head attention captures dependencies between different words and characters, aiding in generating more cohesive and coherent text. This results in more natural and meaningful output. Training the AI with multi-head attention enhances its writing skills, enabling it to understand and interpret complex textual patterns and produce more realistic, contextually relevant content.
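One detail is specific to the decoder of a text generator: when predicting position t, attention must not look at later positions, or the model could simply copy the answer during training. This is enforced with a causal (look-ahead) mask that sets the scores of future positions to negative infinity before the softmax; Keras’s MultiHeadAttention exposes this as a use_causal_mask option in recent TensorFlow versions. The single-head, projection-free sketch below shows the effect, with arbitrary example vectors.

```python
import math


def causal_attention_weights(vectors):
    """Attention weights where position i may only attend to positions 0..i."""
    d = len(vectors[0])
    weights = []
    for i, q in enumerate(vectors):
        scores = []
        for j, k in enumerate(vectors):
            if j > i:
                scores.append(float("-inf"))  # future position: masked out
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d))
        m = max(scores)  # finite, because position i can always see itself
        exps = [math.exp(s - m) for s in scores]  # exp(-inf) is 0.0
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights


def causal_self_attention(vectors):
    """Mix each position's vector from itself and earlier positions only."""
    w = causal_attention_weights(vectors)
    dim = len(vectors[0])
    return [[sum(w[i][j] * vectors[j][c] for j in range(len(vectors))) for c in range(dim)]
            for i in range(len(vectors))]


seq = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in causal_attention_weights(seq):
    print([round(x, 3) for x in row])
# The first row is [1.0, 0.0, 0.0]: position 0 can only attend to itself.
```

The upper triangle of the weight matrix is always zero, so each position’s output depends only on the text before it, which matches how text is produced one step at a time during generation.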

Building the Decoder Layer

Building a text generator around a decoder follows the same four stages as the RNN-based model: processing the text, building the model, training the model, and generating text.

Processing the text involves converting characters to numeric representation and creating training examples and targets.

Building the model includes creating layers such as Embedding, GRU, and Dense to predict the next character in a sequence.

Training the model involves attaching an optimizer and a loss function and configuring checkpoints to monitor training progress.

Generating text uses the trained model to produce new text, with the option to improve results by adjusting parameters.

The decoder is what turns the model’s training into coherent output: given the text so far, it predicts the next character in the sequence.

During training, its parameters are adjusted to make those predictions more accurate.

Best practices for training the decoder layer involve meticulous dataset preparation, careful selection of model layers, configuring the optimizer and loss function, and monitoring training progress through checkpoints. These practices contribute to developing a well-trained decoder layer and better text generation capabilities.

How to Train Your AI Like a Pro

To train your AI like a pro, start by using a StringLookup layer. This converts the characters of Shakespeare’s writing into a numeric representation.

Next, create training examples and targets to process the text properly. This is essential for improving the AI’s writing skills.

Build a model using TensorFlow’s Embedding, GRU, and Dense layers to predict the next character in a sequence. This helps the AI in generating coherent text and writing better.

Attach an optimizer and a loss function, and configure checkpoints to monitor training progress. This allows the AI to learn and refine its writing mechanics.
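To see an optimizer and a loss working together end to end, here is a deliberately tiny training loop in plain Python. The "model" is just a table of logits for which character follows which (a bigram model, far simpler than the GRU network), trained with plain gradient descent on cross-entropy loss. It relies on a standard result: for softmax followed by cross-entropy, the gradient with respect to the logits is the predicted probabilities minus the one-hot target. The training text, learning rate, and epoch count are arbitrary choices for the sketch.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def train_bigram_logits(text, epochs=200, lr=0.5):
    """Fit next-character logits by gradient descent; return (vocab, logits, loss history)."""
    vocab = sorted(set(text))
    idx = {ch: i for i, ch in enumerate(vocab)}
    V = len(vocab)
    logits = [[0.0] * V for _ in range(V)]  # row = current char, column = next char
    pairs = [(idx[a], idx[b]) for a, b in zip(text, text[1:])]
    history = []
    for _ in range(epochs):
        grads = [[0.0] * V for _ in range(V)]
        total_loss = 0.0
        for cur, nxt in pairs:
            probs = softmax(logits[cur])
            total_loss += -math.log(probs[nxt])  # cross-entropy for this pair
            for j in range(V):
                # d(loss)/d(logit_j) = p_j - 1 for the target, p_j otherwise
                grads[cur][j] += probs[j] - (1.0 if j == nxt else 0.0)
        history.append(total_loss / len(pairs))
        # Gradient-descent step on the mean loss.
        for r in range(V):
            for j in range(V):
                logits[r][j] -= lr * grads[r][j] / len(pairs)
    return vocab, logits, history


vocab, logits, history = train_bigram_logits("to be or not to be")
print(round(history[0], 3), "->", round(history[-1], 3))
```

The first recorded loss equals ln of the vocabulary size, because every logit starts at zero, and it falls from there as the table learns, for example, that "t" is usually followed by "o".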

Tweak parameters to enhance the generated text. Also, consider exporting the trained model for future use and exploring advanced customized training options.

These steps, together with the additional tips above, help the AI develop stronger writing skills and better writing mechanics, so it can produce higher-quality, more human-like text.

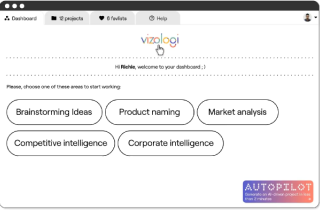

Vizologi is a revolutionary AI-generated business strategy tool that offers its users access to advanced features to create and refine start-up ideas quickly.

It generates limitless business ideas, gains insights on markets and competitors, and automates business plan creation.