Cool Ways to Make Text!

Do you want to make your text more fun and creative? Well, you’re in luck! There are lots of cool and exciting ways to make your text stand out. You can use bold and italic formatting, vibrant colors, and eye-catching fonts. There are endless possibilities to explore!

Whether you’re designing a poster, creating a social media post, or just jazzing up your notes, these tips will help you take your text to the next level. Let’s dive in and discover the coolest ways to make your text shine!

What is Text Generation?

Why Text Generation is Useful

Text generation creates stories and articles that imitate human language patterns. It’s useful for quickly generating content in industries like publishing, marketing, and journalism.

It can also develop chatbots that assist in chats, understand and respond to user queries, and provide support on websites and social media. This improves the user experience.

Text generation can help combat the spread of fake information by generating fact-based content that counters misinformation. This is important in the age of social media and online news. It helps create accurate and reliable content to counteract fake news.

Different Ways to Create Text

Creating text can be done in several ways: traditional human writing, AI-generated content, and collaborative writing platforms.

When computers learn to write, they use language models and machine learning algorithms to process large amounts of text data. This helps them understand linguistic patterns and create coherent sentences.

However, there are challenges in making text. These include biases in the training data, maintaining semantic consistency, and ensuring the quality of the generated content. These challenges emphasize the importance of high-quality training data and the ongoing development of language models capable of producing accurate and unbiased text.

How Computers Learn to Write

When Machines Follow Rules

Machines follow rules in text generation. They use language models and AI processes to produce written content. Through training, machines learn to write by analyzing text data to understand language patterns. Rules are then applied to generate coherent text.
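To make the idea of rules concrete, here is a minimal sketch of rule-based generation. It assumes a tiny hand-written grammar (the `GRAMMAR` table and both function names are made up for illustration) and simply expands the rules until only plain words are left. No learning is involved, so the output can only ever be as varied as the rules.

```python
import random

# Hypothetical hand-written grammar: each symbol maps to its possible expansions.
GRAMMAR = {
    "SENTENCE": [["SUBJECT", "VERB", "OBJECT"]],
    "SUBJECT": [["the", "model"], ["the", "chatbot"]],
    "VERB": [["writes"], ["summarizes"]],
    "OBJECT": [["a", "story"], ["an", "article"]],
}

def expand(symbol, rng):
    """Recursively expand a symbol into a list of words using the rules."""
    if symbol not in GRAMMAR:
        return [symbol]  # not a rule name, so it is a plain word
    production = rng.choice(GRAMMAR[symbol])
    words = []
    for part in production:
        words.extend(expand(part, rng))
    return words

def generate_sentence(seed=None):
    """Build one sentence by expanding the top-level SENTENCE rule."""
    rng = random.Random(seed)
    return " ".join(expand("SENTENCE", rng)) + "."

print(generate_sentence(seed=0))
```

Every sentence this produces is grammatical by construction, but adding a new topic means writing new rules by hand.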

Text tools change the world by augmenting human intelligence. They have real-world applications in fields like data science. For example, ChatGPT can suggest better blog post titles and generate code for data science projects, boosting productivity and creativity.

Text generation tools offer opportunities for innovation and efficiency. However, it’s important to consider their limitations, like occasional errors and lack of common sense in the generated content.

When Machines Learn from Lots of Data

Machines learn from lots of data. They go through a process called training. In this process, they receive a large amount of information and are programmed to recognize patterns within it. Algorithms are used to help the machine improve its performance based on the input data. In general, the more relevant, high-quality data the machine can analyze, the better its predictions become.
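As a rough illustration of training, the sketch below counts which word tends to follow which in a handful of sentences, then uses those counts to write new text. This is a simple bigram model, far smaller than the language models discussed in this article, and the function names and sample sentences are assumptions made for the example.

```python
from collections import Counter, defaultdict
import random

def train_bigrams(corpus):
    """Count, for every word, which words follow it in the training sentences."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def generate(counts, start, max_words=8, seed=None):
    """Write text by repeatedly sampling a likely next word."""
    rng = random.Random(seed)
    words = [start]
    while len(words) < max_words:
        followers = counts.get(words[-1])
        if not followers:
            break  # the model never saw anything after this word
        choices, weights = zip(*followers.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)

corpus = ["the cat sat", "the cat ran", "the dog sat"]
model = train_bigrams(corpus)
print(generate(model, "the", max_words=3, seed=1))
```

With only three sentences the model can say very little. Feeding it more text gives it more patterns to draw on, which is the same effect, at a much larger scale, that the paragraph above describes.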

The benefits of machines learning from lots of data include making predictions, identifying trends, and recognizing complex patterns. This can be very useful in fields like healthcare, finance, and marketing, where large volumes of data need to be analyzed to make informed decisions.

However, there are challenges associated with machines learning from lots of data. These include potential biases in the data, which can lead to inaccurate or unfair outcomes. There are also concerns about privacy and security when handling sensitive information. Ensuring that the quality of the data is high and free from errors or inconsistencies is important when training machines on large datasets.

Really Smart Writing Machines

Text generation is the AI process of creating written content that imitates human language patterns. Really smart writing machines are built on large language models such as GPT-3, which learn from training data to understand and mimic human language. Related models such as BERT are designed mainly to understand text rather than to write it.

These machines learn to write by processing vast amounts of text data from books, internet sources, and other written materials. They can create text using methods like predictive text, language modeling, and even generating code for data science projects.

Text generation is useful in various real-world applications. It can suggest titles for blog posts, create functional codebases for data science projects, and even produce human-like conversations in chatbots.

In the future, developments in this technology may include improvements in semantic consistency, reducing bias in generated texts, and the use of text generation in more advanced data science and AI applications. As these technologies continue to develop, there is also potential for text generation to aid in creative writing, content creation, and even assist in translation and language learning.

Making Text for Chats and Help

Talking to Bots

Talking to bots provides an opportunity for users to interact with artificial intelligence in a more conversational manner and receive relevant information or perform tasks. Computers learn to write and generate text using language models trained on vast amounts of data, which allow them to predict and generate human-like sentences and texts.

While machines can write in a way that imitates human language, the accuracy of computer writing varies depending on the quality of the training data and the language model used. It’s important to note that computer-generated texts may contain occasional errors and lack common sense due to limitations in understanding context and nuance. Despite this, text generation tools can still augment human intelligence and provide valuable support in various applications, such as suggesting blog post titles or generating code for data science projects.
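For a feel of how a very basic help bot works, here is a small sketch. It does not use a language model at all: it just matches the words in a question against a short list of known questions, which is a swapped-in and much simpler technique. The `FAQ` entries, the function names, and the `min_overlap` setting are all invented for this example.

```python
import re

# Hypothetical knowledge base: known questions and their canned replies.
FAQ = {
    "how do i reset my password": "Use the 'Forgot password' link on the sign-in page.",
    "what are your opening hours": "Support is available 9am to 5pm on weekdays.",
}

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))

def answer(question, faq=FAQ, min_overlap=2):
    """Reply with the answer whose known question shares the most words."""
    asked = tokenize(question)
    best_reply, best_score = None, 0
    for known_question, reply in faq.items():
        score = len(asked & tokenize(known_question))
        if score > best_score:
            best_reply, best_score = reply, score
    if best_score < min_overlap:
        # Too little in common with anything we know: hand over to a human.
        return "Sorry, I don't know. Let me connect you with a person."
    return best_reply

print(answer("I need to reset my password"))
```

The fallback reply matters as much as the matching: a bot that admits it does not know is usually more helpful than one that guesses.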

Making Help that Talks

Text generation is a process where AI creates human-like written content. It’s helpful for customer service chatbots, content creation, and data science projects. AI learns to write using language models like GPT-3, BERT, and T5. These models are pretrained on large amounts of unlabeled text, and some are then refined with reinforcement learning based on human feedback.

The challenges of text generation include ensuring semantic consistency, reducing bias, and using high-quality training data. In the future, AI in text generation will focus on improving natural language understanding, addressing common sense reasoning, and enhancing context preservation. New methods like zero-shot learning and few-shot learning are being explored for writing in multiple languages with minimal training data.
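Few-shot learning is easier to picture with an example. In practice it often means showing the model a few worked examples inside the prompt before asking the real question, while zero-shot means giving no examples at all. The sketch below only builds the prompt text. The `Input:` and `Output:` layout and the function name are assumptions for illustration, and sending the prompt to an actual model is left out.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, worked examples, and the new query into one prompt."""
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    lines.append(f"Input: {query}")
    lines.append("Output:")  # left open for the model to complete
    return "\n".join(lines)

few_shot = build_few_shot_prompt(
    "Translate English to French.",
    [("cheese", "fromage"), ("bread", "pain")],
    "apple",
)
zero_shot = build_few_shot_prompt("Translate English to French.", [], "apple")
print(few_shot)
```

Passing an empty list of examples turns the same function into a zero-shot prompt, which makes it easy to compare the two approaches on the same task.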

Creating Stories and Articles

Text generation happens in different ways. One way is by using language models like GPT-3, BERT, ALBERT, and T5. These models help imitate human language patterns and styles. Computers learn to write by being fed lots of text data during the training process. This helps them understand and replicate human language.

Creating text has challenges. These include making sure the meaning stays consistent and addressing any biases found in the generated texts. Text generation tools also have limitations like occasional errors and a lack of common sense.

These tools are used in various ways. For example, they can suggest better titles for blog posts and generate functional codebases for data science projects. This aims to improve content creation and data science processes.

Stopping Fake Stuff

Text generation can be done in different ways. One way is using language models like GPT-3, BERT, ALBERT, and T5. These models imitate human language patterns and styles. They’re trained on lots of text data, so they can create content that sounds real.

Text tools can also work in the other direction. They help spot fake content by finding and filtering out generated or manipulated text. For example, language models can find inconsistencies and strange language patterns, flagging them as possibly fake.
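As a toy example of a “strange language pattern”, the sketch below measures how often the same three-word phrase repeats in a text. This is only a crude heuristic, not how real detectors work, and the `threshold` value and function names are assumptions. It can flag spammy repetition, but it cannot tell whether a claim is true.

```python
from collections import Counter

def repeated_trigram_ratio(text):
    """Return the share of three-word phrases that occur more than once."""
    words = text.lower().split()
    trigrams = [tuple(words[i:i + 3]) for i in range(len(words) - 2)]
    if not trigrams:
        return 0.0
    counts = Counter(trigrams)
    repeated = sum(count for count in counts.values() if count > 1)
    return repeated / len(trigrams)

def looks_suspicious(text, threshold=0.3):
    """Flag text for human review when it is unusually repetitive."""
    return repeated_trigram_ratio(text) > threshold

print(looks_suspicious("buy now buy now buy now buy now"))
print(looks_suspicious("the quick brown fox jumps over the lazy dog"))
```

A flag from a check like this should send the text to a person for review rather than remove it automatically.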

While computers writing text can be helpful, it’s important to be careful. These tools might make mistakes or not make sense sometimes.

Even though there can be issues, text generation tools are helpful. They can suggest titles for blog posts and create code for data science projects, which boosts human intelligence in some areas.

Turning Words into Other Languages

Machine translation can be accomplished in several ways: neural machine translation, rule-based machine translation, statistical machine translation, and hybrid machine translation.

Computers learn to translate and write in different languages by training on large amounts of bilingual text. This allows the systems to identify patterns, grammar rules, and vocabulary in both source and target languages.
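Here is a very small sketch of learning from bilingual text. It assumes a handful of matched English and French sentences and pairs each English word with the French word it appears alongside most consistently, using a simple overlap score (the Dice coefficient). Real translation systems are far more sophisticated, and the function names and sample sentences are made up for the example.

```python
from collections import Counter

def learn_word_table(pairs):
    """Guess a word-to-word table from (source, target) sentence pairs."""
    source_counts, target_counts, joint = Counter(), Counter(), Counter()
    for source, target in pairs:
        s_words = set(source.lower().split())
        t_words = set(target.lower().split())
        source_counts.update(s_words)
        target_counts.update(t_words)
        joint.update((s, t) for s in s_words for t in t_words)

    def dice(s, t):
        # High when s and t appear together about as often as they appear at all.
        return 2 * joint[(s, t)] / (source_counts[s] + target_counts[t])

    return {s: max(target_counts, key=lambda t: dice(s, t)) for s in source_counts}

def translate(sentence, table):
    """Translate word by word, leaving unknown words unchanged."""
    return " ".join(table.get(word, word) for word in sentence.lower().split())

pairs = [("the cat", "le chat"), ("the dog", "le chien"), ("a cat", "un chat")]
table = learn_word_table(pairs)
print(translate("a dog", table))
```

Word-by-word translation ignores word order and grammar, which is one reason the output of simple systems often does not sound natural.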

However, making sure the translated text sounds natural and is accurate poses challenges, such as maintaining semantic consistency, avoiding bias, and the need for high-quality training data.

Despite these challenges, text generation tools have practical applications in data science projects. For example, they can suggest better titles for blog posts, generate functional codebases for data science projects, and augment human intelligence.

These tools provide valuable assistance, but occasional errors and a lack of common sense in the generated content are still evident.

Hard Parts about Making Text

Making Sure Words Sound Right

Writers can make sure their words sound right by paying attention to the rhythm and cadence of the language. They can do this by reading their writing out loud. This helps them spot any awkward or jarring phrasing.

They can also use text-to-speech software to listen to their writing. This can help identify areas where the flow of words may not sound natural. In different languages or dialects, writers can study pronunciation guides and consult with native speakers.

Thinking about the sound and flow of words in written text is important. It can greatly impact the readability and impact of the writing. A well-crafted rhythm and cadence can draw readers in and make the text more engaging and memorable. On the other hand, awkward or jarring phrasing can disrupt the reading experience and detract from the overall impact of the writing.

Therefore, carefully considering the sound and flow of words is important for creating effective written content.

Keeping Text Good and Fair

One way to ensure fair and good text is to have high-quality training data. This data should represent different perspectives and avoid biased language.

Another method is to use evaluation metrics. This helps to check the accuracy, coherence, and fairness of the generated text.
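One simple kind of evaluation metric compares generated text with a reference written by a person. The sketch below scores word overlap between the two (a unigram F1 score). It is an illustrative choice, not the only metric, and it says nothing about coherence or fairness, which need other checks. The function name is an assumption.

```python
from collections import Counter

def unigram_f1(generated, reference):
    """Score word overlap between generated text and a reference, from 0 to 1."""
    gen = Counter(generated.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((gen & ref).values())  # words shared by both, counting repeats
    if overlap == 0:
        return 0.0
    precision = overlap / sum(gen.values())  # how much of the output is in the reference
    recall = overlap / sum(ref.values())     # how much of the reference was covered
    return 2 * precision * recall / (precision + recall)

print(unigram_f1("the cat sat", "the cat sat down"))
```

A high score only means the wording is similar to the reference, so a text can score well and still be wrong or unfair.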

To teach computers to create fair and accurate text, ethical considerations must be part of the training process. This includes using algorithms to detect and reduce biased language.
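A very basic version of such a check is a word list. The sketch below flags a few gendered terms and suggests neutral alternatives. The `NEUTRAL_ALTERNATIVES` list is a tiny made-up sample and the function name is an assumption. Real bias detection needs much more than word matching, since bias often lives in what a text implies rather than in single words.

```python
import re

# Hypothetical review list: terms to flag and a suggested neutral alternative.
NEUTRAL_ALTERNATIVES = {
    "chairman": "chairperson",
    "manpower": "workforce",
    "mankind": "humanity",
}

def flag_terms(text, alternatives=NEUTRAL_ALTERNATIVES):
    """Return (position, word, suggestion) for every listed term found in the text."""
    findings = []
    for match in re.finditer(r"[A-Za-z]+", text):
        word = match.group().lower()
        if word in alternatives:
            findings.append((match.start(), match.group(), alternatives[word]))
    return findings

for position, word, suggestion in flag_terms("The chairman asked for more manpower."):
    print(f"{word!r} at {position}: consider {suggestion!r}")
```

The function only reports findings and leaves the decision to a person, because a flagged word is sometimes the right word in context.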

It’s also important for the language model to respect privacy, consent, and cultural sensitivities. This plays a crucial role in maintaining fairness and accuracy.

Ethical considerations with text generation tools involve addressing the potential for spreading misinformation and promoting harmful stereotypes. It should also include respecting intellectual property rights.

Understanding the impact of generated text on society and considering the implications of using language models for commercial or personal gain are important ethical considerations.

Thinking about Right and Wrong

Machines can’t understand right and wrong when creating text. Humans need to make sure computer-generated text follows ethical standards. This means considering the risk of biased or misleading content and harmful material. Developers and users should set strict guidelines and filters to maintain ethical standards. Organizations should focus on training data quality and evaluate text generation models to reduce negative impacts.

Text Making in the Future

New Tricks for AI

Text generation using AI has various methods. Some methods include language models like GPT-3, BERT, ALBERT, and T5. These models are trained on large datasets to imitate human language patterns and styles, allowing them to generate coherent and contextually relevant text.

AI has the capability to generate text for a wide range of applications including chats, help, stories, and articles. For instance, ChatGPT can be used to suggest better titles for blog posts and even generate functional codebases for data science projects.

However, the use of AI for text generation poses challenges related to bias in generated texts, semantic consistency, and the need for high-quality training data. Additionally, ethical considerations such as the potential misuse of AI-generated content and the lack of common sense in the generated outputs should be carefully addressed.

Despite these challenges, AI-powered text generation tools have the potential to augment human intelligence and provide valuable assistance in various domains.

Mixing Different Ways to Make Text

One way to create diverse and impactful writing is by combining different methods of text generation. For example, using OpenAI’s GPT-3 model along with Google’s BERT model can result in more natural, coherent, and contextually relevant text.

By mixing these methods, writers can benefit from a wider range of language styles and patterns, resulting in more engaging and relatable content. However, this approach also brings challenges, such as maintaining consistency in tone and avoiding biases.

Despite these challenges, the potential benefits of mixing different ways to make text are significant, including improved creativity, enhanced efficiency, and the ability to customize text generation based on specific project needs. Additionally, the combination of methods allows for the generation of more accurate and relevant content across various industries and applications.
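One common way to combine two methods is to let one component write several candidates and let a second component pick the best. The sketch below shows that pattern with simple stand-ins: `template_generator` and `shortness_score` are hypothetical placeholders, not real models, and in practice each could be replaced by a call to a language model.

```python
def generate_then_rerank(prompt, generate_candidates, score, top_k=1):
    """Produce candidates with one component and keep the best by another's score."""
    candidates = generate_candidates(prompt)
    ranked = sorted(candidates, key=lambda text: score(prompt, text), reverse=True)
    return ranked[:top_k]

# Stand-in generator: a few fixed title templates (hypothetical).
def template_generator(prompt):
    return [
        f"{prompt}: a quick guide",
        f"Everything about {prompt}",
        f"{prompt} explained simply",
    ]

# Stand-in scorer: prefers shorter titles (hypothetical).
def shortness_score(prompt, text):
    return -len(text)

print(generate_then_rerank("Text generation", template_generator, shortness_score))
```

Because the generator and the scorer are passed in as plain functions, either one can be swapped out without touching the other.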

How Text Tools Change the World

Text tools, like GPT-3, BERT, ALBERT, and T5, have changed communication. They can create content that reads like human language. This improves content creation, translation, and summarization, making communication more effective on different platforms. These tools also help with global challenges and inclusivity by offering language translation and text-to-speech services. They even assist people with disabilities in accessing information.

In data science, tools like ChatGPT suggest titles for blog posts, create codebases, and enhance human intelligence. Despite their benefits, these tools can have occasional errors and lack common sense. Continuous improvement and evaluation are essential for their application.

Questions People Ask

Can Machines Write like Us?

Machines can now create text that looks human. They use models like GPT-3, BERT, ALBERT, and T5 to do this. But the text they make isn’t always right. It might have mistakes and not make sense. Even though they help with tasks, like writing, they aren’t perfect. People like data scientists, writers, and researchers can use these tools. They are useful for things like coming up with blog post ideas and making code for data projects.

But there are still problems, like wrong ideas in the text and needing better training info. As the models get better, there are new chances and things to study in text making.

Are Computer Writings Always Right?

Computer writings can sometimes be wrong. Text generation models have improved a lot, but they’re not perfect and can make mistakes. Machines can write like humans using AI-powered language models, but they might struggle with making sense and being consistent. These tools are used in data science, content generation, and creating code. It’s important to know that their performance can vary, and they might not always produce perfect or contextually appropriate content for all uses.

While text generation models have great potential and benefits, it’s really important to be careful and evaluate their output thoughtfully.

Do These Tools Work Everywhere?

Text generation tools may not work well in certain regions due to limitations in understanding local idioms, dialects, and cultural references. They may also struggle with accommodating different languages and dialects. Ethical and legal considerations are important when using these tools in various regions. It’s crucial to follow copyright laws, avoid spreading misinformation, and ensure cultural sensitivity.

Businesses and organizations working globally should carefully consider these factors when implementing text generation tools across different cultures.

Who Gets Help from Text Tools?

Text tools help many people. They benefit content creators, writers, marketers, and researchers. These tools can:

- Help create engaging and informative content

- Improve productivity and efficiency

- Provide valuable insights through data analysis

Text tools have diverse impacts on different users. They can:

- Enhance creativity and innovation

- Simplify complex tasks

- Improve overall communication

For example:

- Content creators can generate new ideas, improve writing, and engage their audience using text tools.

- Marketers can create compelling ad copies and analyze customer feedback with these tools.

- Researchers can use text tools for data analysis and summarization.

Is it Okay to Let Computers Write?

Text generation models like GPT-4, BERT, ALBERT, and T5 have shown that machines can imitate human language. However, they may not always be accurate and can lack common sense or contain errors. Despite this, using text generation tools in data science projects has benefits, such as suggesting better titles for blog posts and generating functional codebases.

The ethics of letting computers write raises concerns about bias in generated texts and the need for high-quality training data. While these tools augment human intelligence, there is a need to address the ethical implications and potential limitations of relying solely on computer-generated content.

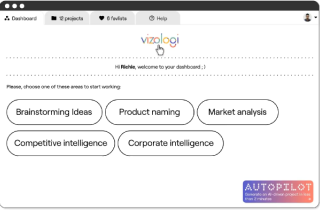

Vizologi is a revolutionary AI-generated business strategy tool that offers its users access to advanced features to create and refine start-up ideas quickly.

It generates limitless business ideas, gains insights on markets and competitors, and automates business plan creation.