Deciphering the enigma of AGI (Artificial General Intelligence).

Is it a dream? Is it science fiction? No! It’s the emergence of the superintelligence we’ve long imagined. Dive into the intriguing world of Artificial General Intelligence (AGI), where the boundaries between fiction and reality blur.

Think of generative AI as the trailer for everything that is yet to come in the next decade. We are watching that exciting preview that leaves you wanting more. AGI will be the full movie: a drama full of surprises and unexpected twists, with a plot that defies any foresight, top-notch special effects, and a cast of characters that will captivate you from start to finish. Ultimately, generative AI is just the backdrop; AGI will be the real show!

There is little doubt that the mission of the leading players in the generative AI scene, such as OpenAI, Microsoft, Google, Meta, Cohere, and Anthropic, is to build what we call Artificial General Intelligence. That is where the horizon points; that is the goal. GenAI is only the prelude to what is to come.

As to what form this technology (or “entity,” as some call it) will take, no one knows to date. It is pure speculation, and reaching a consensus on what AGI is remains challenging. There is a profound lack of definition; not even OpenAI could give you a 100% precise one. We are having a hard time defining something that does not yet exist.

But what we do know is what it is not. To clear up the misunderstandings arising in the press and on social networks, we can state emphatically that the current state of the art in LLMs (Large Language Models) integrated into GPTs, such as ChatGPT, does not constitute an AGI but a form of specialized artificial intelligence. This is not a contradiction: it is a specialized AI with a general-purpose nature, similar to how we use Internet search to look up almost any topic. The search bar remains the same; only the results vary depending on the question.

Definition of AGI (Artificial General Intelligence).

Artificial General Intelligence (AGI) refers to a type of artificial intelligence distinguished by its ability to understand, learn, reason, and perform tasks at a level that surpasses human intelligence in various areas.

Unlike specific models such as ChatGPT, which are designed for natural language processing tasks, AGI will be characterized by its general approach and the ability to be applied to various tasks and domains without requiring specific programming.

While models such as ChatGPT are highly specialized and designed for specific tasks, AGI will seek to emulate the versatility and flexible adaptation of the human mind, representing an advanced goal in artificial intelligence.

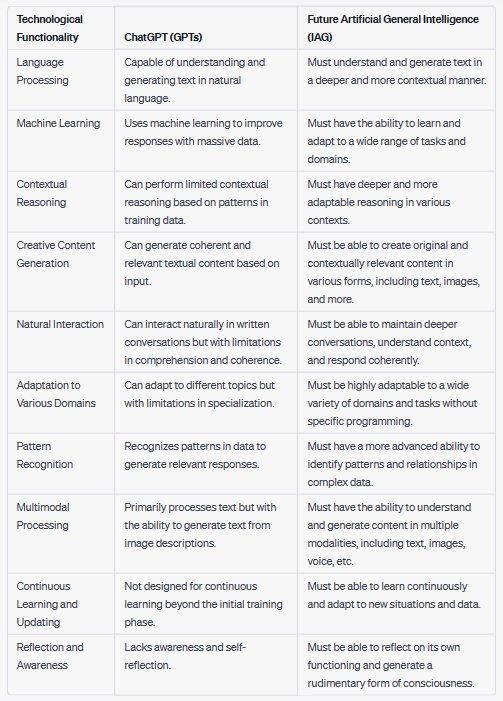

Similarities between current GPTs vs. future AGIs.

It is worth noting that the recent advances in GPTs establish the technological basis for what is coming. There will be differences in the future, of course, and the leap will by no means be trivial, but it can be stated that the primary basis of future AGIs is already in production in the state of the art we have in 2023.

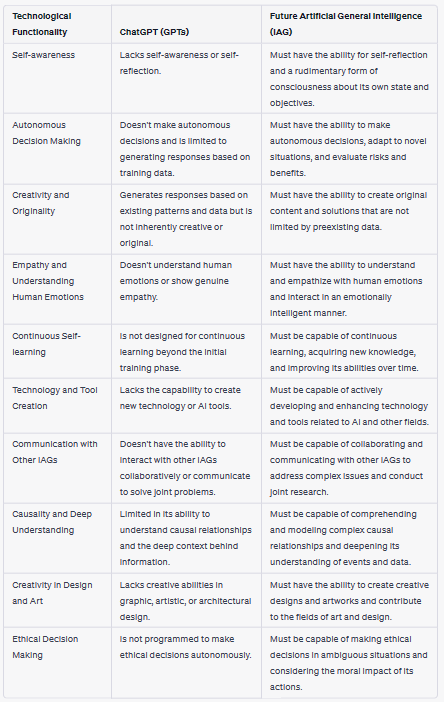

Comparative table of similarities between GPTs and AGIs

With GPT-4, and the rumored GPT-5 that could arrive by the end of the year, we are moving much further into multimodal processing: incorporating multiple types of data inputs, such as text, images, audio, video, and metadata, into a single AI system.

This amalgamation will allow the system to analyze, understand, and process information more holistically, since it uses not one but several different modes of communication. In any case, we must not confuse multimodality with generality.

Differences between current GPTs vs. future AGIs.

Among the points of difference, the evolution of GPTs towards AGIs will move in the direction of acquiring skills that we consider much more human, outside the domain of what a machine can do today: the attributes that set our species apart from all others. These AIs could reproduce complex emotions, reflection, ethics in decision-making, and the much-discussed consciousness.

Each of the points described above involves such complexity at a conceptual and technical level that the general confusion about AGI is perfectly understandable. Many of the challenges proposed today seem practically impossible or unfeasible, but after my experience with this technology, I do not dare to rule out any of the proposed scenarios.

Emotions can, to some extent, be translated into code; several projects have been underway in this regard for years, both in robotics and in image and video recognition. Ethics, understood as a set of recognizable rules based on a consensus of good practices, would also be programmable in some way. The problem there will be social and political, not technical.

From a technical point of view, I do not see how we can give consciousness to a machine when we still do not understand well how our own human consciousness works. It would make some sense to think of a kind of synthetic consciousness that the machine could acquire along with a series of predetermined objectives.

Another matter would be to define “technical consciousness” as the machine being aware of its own state, for example, whether it is switched off or plugged in. That would be a hint of consciousness, but under this reasoning the fuse in your house’s electrical panel would be conscious too.

Comparative table of differences between GPTs and AGIs.

Singularities, bots, and humanoids.

It is also unclear what form this technology will take. Beyond chatbots, AGI will have the potential for real-world product creation and self-improvement, surpassing human understanding and even patching its vulnerabilities.

It may take shape purely in software, or in software embedded in hardware (robotics). On this point, it is inevitable to refer to two cult science fiction movies which, as the years have gone by, seem to contain less fiction and more science.

I don’t know whom Spike Jonze talked to or consulted in 2013 to shoot the movie Her, maybe a young Sam Altman, who knows, but he couldn’t have been more right. The film features a virtual assistant named Samantha; more than one startup is already working on how to create this product.

For those of you who have seen the movie, Samantha represents what could be the future evolution of an AGI operating system. The following functionalities appear in the movie sequences: Natural language comprehension and communication, emotional intelligence, learning and adaptation, personalized assistance, creativity and artistic expression, remote interaction, curiosity, exploration, self-improvement and growth, complex problem-solving, and exploration of consciousness.

It could be said that with current technology, Samantha would be 30%-40% solved. In software, it makes sense to keep evolving in this direction, but there will be a thousand surprises along the way. In this branch, the possibilities are endless, and in the medium term we will see how a layer of emotions is incorporated into the GPTs; before that, interaction will need to shift from written text to voice.

For hardware plus software, the reference is the movie Ex Machina, in which a gynoid called AVA appears. In this case, we can say that we are pretty far from reaching this technological level. The humanoid that Tesla is preparing may begin to work in a few years; however, there is still a long way to go until this prototype reaches our homes as a household appliance next to the television.

AVA displays the following functionalities in the film: Natural language communication, emotional expression, learning and adaptation, manipulation and deception, problem-solving and strategy, self-preservation instinct, sensory perception, physical manipulation, curiosity and desire for freedom, and ethical and existential issues.

AVA today is fiction. Samantha v1.0, an elementary version, could be seen within a horizon of 3 to 5 years. In robotics, however, there are significant challenges to overcome, and the state of the art in Artificial Intelligence will be the least of its problems. Below is the list of challenges to be solved, with no easy solution in the short or medium term:

- Mechanical design and dexterity: Involves the creation of robots with the ability to move and manipulate objects efficiently and accurately.

- Sensory perception: The ability of robots to gather information from the environment through sensors and process it effectively.

- Object recognition and manipulation: The ability of robots to identify objects and perform physical actions on them.

- Human-machine interaction: The improvement of communication and collaboration between humans and robots.

- Autonomy and decision-making: The ability of robots to make decisions independently and operate autonomously in complex environments.

- Power and energy efficiency: The search for efficient and sustainable energy sources for robots.

- Ethical and safety aspects: The consideration of ethical issues in the design and use of robots, and of safety for both robots and people.

- Cost and accessibility: Reducing the cost of robotic technology and making it accessible to a broader audience.

- Regulatory and liability aspects: The creation of regulations and standards for the robotics industry and the definition of liability in case of incidents.

- Social acceptance and impact: The assessment of how society perceives robots and their impact on everyday life.

There is another possibility: the human-cloud-AGI interconnection. This concept is called superintelligence, and it points to an intersection between the idea of AGI discussed in this article, the concept of singularity proposed by Ray Kurzweil, and the vision of Elon Musk’s company Neuralink.

If AGI approaches singularity or transhumanism, as Kurzweil proposes, brain-machine interfaces like those developed by Neuralink could seamlessly integrate AI with the human mind. This could have profound implications for how humans interact with technology and how AI is deployed and used in society. What I can assure you is that I’m not going to connect to this.

Conclusions.

It is hard to find a consensus on what AGI is, what it will be like, or when it will arrive. Everything points to the fact that in the next ten years we could have something similar, or some significant advance in this direction. The degree of uncertainty about its feasibility is still very high, despite the recent advances that GenAI has brought. Investment flows will be critical to making significant progress, especially in robotics.

It will take time until we have a humanoid in production that can help you with household chores, be programmed to help you at work, or take care of a person. There are advances in this regard in Japan and South Korea, among the countries with the most robots per person, where people are accustomed to interacting with this technology. But what we are talking about in this article goes far beyond the current state of robotics; we are not talking about a mechanical arm that serves you a plate of noodles, or a robot that welcomes you and talks to you at the entrance of a hotel.

Even once it is viable, it will take some time until that product reaches a reasonable price of between $4,000 and $6,000. There is also the variable of cultural and social acceptance; in Japan, these interactions with machines are accepted naturally.

However, as with GenAI, in software we will find AGI flowing like water from a spring or electricity through wires: in the cloud, the Internet, applications, computers, vehicles, wearables, on the fridge screen, etc.

To understand the implications that AGI will bring us, I end with a haunting phrase that Sam Altman (CEO of OpenAI) uttered in 2021:

‘AGI was going to get built exactly once.’

Sam Altman.

I hope this reflection does not leave you indifferent.

Pedro Trillo, CEO and founder at Vizologi.

Vizologi is a revolutionary AI-generated business strategy tool that offers its users access to advanced features to create and refine start-up ideas quickly.

It generates limitless business ideas, provides insights on markets and competitors, and automates business plan creation.