How AI Makes Text with Smart Algorithms

Artificial Intelligence (AI) has changed how we use technology, and text creation is one of the areas where its impact is most visible. AI systems use algorithms trained on large amounts of writing to produce content that is coherent, useful, and tailored to specific requirements. Chatbots and content tools are just two examples of how AI is changing the way information is shared online. Let’s explore how these algorithms work, where they are used, and where they fall short.

Understanding AI-Powered Text Generation

Defining Language Models

A language model is an AI system that learns the statistical patterns of language from training text and uses them to produce writing that resembles human language. These models are used in natural language processing, content creation, customer service, and coding assistance.

Two abilities define a useful language model: capturing sentence structure and context, and generating coherent text from that understanding. Modern models do both with neural networks.

Models differ in how they are built and trained. Generative models such as GPT and PaLM predict text one token at a time and are designed to write. Encoder models such as BERT and RoBERTa are designed to understand text rather than produce it, which is why they often serve as the basis for classifiers, including detectors of AI-generated content. GPT-2 is a generative model, although its outputs have also been used to train such detectors.

These models have real-world applications in generating articles and blog posts and in assisting with data science projects. They are efficient, but they also have limitations such as occasional errors and a lack of common sense.

These tools complement human intelligence rather than replace it. Their output still needs review, and deciding whether a text was AI-written or plagiarized usually calls for more than one assessment method.

Overview of Text Generation Methodologies

Text generation algorithms can be grouped into different categories:

- Recurrent Neural Networks (RNNs)

- Generative Adversarial Networks (GANs)

- Transformers

- Markov Chains

- Deep Belief Networks (DBNs)

These algorithms have transformed text generation by allowing computers to learn patterns from large datasets and create language similar to human patterns.

Automated text creation brings efficiency by swiftly producing coherent and contextually relevant text for various applications, like natural language processing, content creation, and customer service.

However, AI text generation comes with challenges and limitations, including occasional errors and a lack of common sense. It also raises concerns about cheating and fraud. To address these concerns, AI content detection tools have been developed, among them classifiers built on models such as BERT and RoBERTa, detectors aimed at the output of generators such as GPT-2 and GPT-3, and OpenAI’s AI Text Classifier. These tools are useful for flagging AI-generated content, but they make mistakes, so additional methods may be needed to detect plagiarized or AI-written content.

Categories of Text Generation Algorithms

Recurrent Neural Networks (RNN)

A Recurrent Neural Network (RNN) is a neural network with recurrent connections: the hidden state computed at one step is fed back in at the next. This gives the network a memory of earlier inputs and makes it a natural fit for sequential data like text, where words and phrases are processed in the order they appear.

RNNs have been applied to text generation tasks in natural language processing, content creation, and coding assistance.

For example, an RNN trained on a large body of text can generate passages for articles or draft replies for customer service by repeatedly predicting the next word or character. Variants such as LSTMs and GRUs were introduced to help the network retain information over longer spans.
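To make the idea of "remembering previous inputs" concrete, here is a minimal sketch of a plain RNN in pure Python. The weights and the two-word input sequence are made-up values chosen only for illustration; a real model would learn its weights from data and add an output layer that predicts the next word.

```python
import math

def rnn_step(x, h_prev, W_xh, W_hh, b_h):
    """One step of a plain RNN: h_t = tanh(W_xh x + W_hh h_prev + b_h)."""
    h_new = []
    for i in range(len(b_h)):
        total = b_h[i]
        total += sum(W_xh[i][j] * x[j] for j in range(len(x)))
        total += sum(W_hh[i][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new

def run_rnn(inputs, W_xh, W_hh, b_h):
    """Feed a sequence through the RNN, carrying the hidden state forward."""
    h = [0.0] * len(b_h)
    states = []
    for x in inputs:
        h = rnn_step(x, h, W_xh, W_hh, b_h)
        states.append(h)
    return states

# Toy weights (hypothetical values, not learned from any data).
W_xh = [[0.5, -0.3], [0.1, 0.8]]
W_hh = [[0.2, 0.0], [-0.4, 0.3]]
b_h = [0.0, 0.1]

# Two "words", each encoded as a 2-dimensional one-hot vector.
sequence = [[1.0, 0.0], [0.0, 1.0]]
states = run_rnn(sequence, W_xh, W_hh, b_h)
```

Because each hidden state depends on the one before it, feeding the same two words in the opposite order produces a different final state, which is how the network keeps track of word order.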

Generative Adversarial Networks (GANs)

Generative Adversarial Networks consist of two neural networks: the generator and the discriminator. The generator produces synthetic data, while the discriminator tries to tell it apart from real data. The two are trained against each other: the generator tries to create data realistic enough to fool the discriminator, and the discriminator tries not to be fooled. This competitive game, known as adversarial training, pushes the generator toward better output quality.

Compared to other text generation methods like Recurrent Neural Networks and Transformers, GANs take a different approach. RNNs and Transformers are usually trained to predict the next word in a sequence, while a GAN generator is trained on the discriminator’s feedback about whether its output looks real. This works well for continuous data such as images, but text is made of discrete words, which makes it hard to pass that feedback back to the generator. Text GANs such as SeqGAN work around this with reinforcement learning techniques.

In practice, GANs are far better established for images than for text. Their text applications remain mostly experimental, for example generating short passages or synthetic training data, and production tools for content creation, customer service, and coding assistance generally rely on Transformer-based language models instead.
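The competitive game between the two networks is usually expressed as a pair of loss functions. The sketch below computes them in pure Python from discriminator scores, where a score is the discriminator’s estimated probability that a sample is real. The scores are hypothetical numbers, and the generator loss shown is the common "non-saturating" variant, which is an assumption rather than something this article specifies.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real samples should score near 1, fakes near 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating loss: low when the discriminator scores fakes near 1."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator scores on real samples.
real_scores = [0.9, 0.8]

# Early in training the fakes are easy to spot; later they are harder.
early_fake_scores = [0.1, 0.2]
later_fake_scores = [0.5, 0.5]

early_g = generator_loss(early_fake_scores)
later_g = generator_loss(later_fake_scores)
early_d = discriminator_loss(real_scores, early_fake_scores)
later_d = discriminator_loss(real_scores, later_fake_scores)
```

As the fakes become more convincing, the generator’s loss falls and the discriminator’s loss rises, which is the tug-of-war that adversarial training relies on.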

Transformers and Their Predecessors

Before the Transformer architecture was introduced in 2017, text generation relied on approaches such as Recurrent Neural Networks, Generative Adversarial Networks, Markov Chains, and Deep Belief Networks. These models could generate coherent text over short spans, but they were limited in their ability to capture long-range dependencies, and recurrent models in particular suffered from vanishing gradients and slow training because they process a sequence one step at a time.

Transformers addressed these limitations by processing all positions of a sequence in parallel and letting each position draw on every other one. BERT, RoBERTa, and GPT-2 are not predecessors of the Transformer but models built on it, and each improved contextual understanding or generation quality in turn.

Thus, models like BERT, RoBERTa, and GPT-2 paved the way for the larger Transformer-based systems used in today’s content creation tools.
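Transformers handle long-range dependencies through self-attention, in which every position in a sequence can look directly at every other position. The following is a minimal sketch of scaled dot-product attention in pure Python. The token vectors are made-up values, and the learned projection matrices that a real Transformer applies to produce queries, keys, and values are left out for brevity.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
    return outputs

# Three token vectors (hypothetical values). In self-attention the same
# vectors serve as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
context = attention(tokens, tokens, tokens)
```

Each output vector is a blend of all the token vectors, weighted by similarity, so distant tokens can influence each other in a single step instead of passing information along one position at a time.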

BERT: Bidirectional Encoder Representations from Transformers

BERT, which stands for Bidirectional Encoder Representations from Transformers, is a language model developed by Google. It is designed to understand the meaning of a word from its context, and Google has applied it to search queries to improve results. BERT considers the full context of a word by looking at the words both before and after it, which significantly improved performance on natural language processing tasks compared to earlier models that read text in one direction only.

This enables better understanding of the nuances and meanings in a sentence. BERT is an encoder, so it is not designed to write free-form text on its own. In data science projects that involve text generation, it is more often used alongside a generative model, for example to classify, rank, or check generated content for applications like content creation, customer service interactions, and coding assistance.
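The value of looking at both sides of a word can be illustrated with a toy fill-in-the-blank exercise. The sketch below is not BERT: it simply counts which word appears between each pair of neighbours in a tiny made-up corpus. BERT learns a far richer version of this with a neural network, but the principle of using left and right context together is the same.

```python
from collections import Counter, defaultdict

def build_context_table(sentences):
    """Count which word appears between each (left, right) pair of neighbours."""
    table = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i in range(1, len(words) - 1):
            table[(words[i - 1], words[i + 1])][words[i]] += 1
    return table

def fill_mask(table, left, right):
    """Return the word seen most often between `left` and `right`, or None."""
    candidates = table.get((left, right))
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Tiny illustrative corpus (made up for this example).
corpus = [
    "she ate the apple pie",
    "she ate the cherry pie",
    "she ate the apple pie",
    "she ate the green salad",
]
table = build_context_table(corpus)

guess_before_pie = fill_mask(table, "the", "pie")
guess_before_salad = fill_mask(table, "the", "salad")
```

The left neighbour is "the" in both queries, so the word to the right is what changes the answer. A model that reads only left to right would not have that information when filling the gap.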

RoBERTa: A Robustly Optimized Version of BERT

RoBERTa is an improved version of BERT. It keeps the same architecture but changes how the model is pre-trained: it trains for longer on more data, uses larger mini-batches, drops BERT’s next-sentence prediction objective, and applies dynamic masking, in which the masked words change each time a sentence is seen. Like BERT, RoBERTa is an encoder, so in text generation projects it mainly contributes language understanding and context awareness, for example to score or classify generated text.

These optimizations make it adaptable to a wide range of language tasks, and it outperformed BERT on standard benchmarks when it was released.
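Dynamic masking is easy to show in a few lines. In the sketch below, static masking draws one mask pattern during preprocessing and reuses it every epoch, while dynamic masking draws a fresh pattern each time the sentence is seen. This is a simplification: the function name, the 30% masking rate, and the sentence are illustrative choices, and the real procedure also sometimes substitutes a random token or leaves a selected token unchanged instead of always inserting a mask.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, rng, mask_prob=0.15):
    """Replace each token with [MASK] independently with probability mask_prob."""
    return [MASK if rng.random() < mask_prob else tok for tok in tokens]

tokens = "the model learns to predict words hidden from its input".split()

# Static masking: one pattern is drawn up front and reused in every epoch.
static_rng = random.Random(0)
static_view = mask_tokens(tokens, static_rng, mask_prob=0.3)
static_epochs = [static_view for _ in range(3)]

# Dynamic masking: a new pattern is drawn every time the sentence is seen.
dynamic_rng = random.Random(0)
dynamic_epochs = [mask_tokens(tokens, dynamic_rng, mask_prob=0.3)
                  for _ in range(3)]

for epoch, view in enumerate(dynamic_epochs):
    print(epoch, " ".join(view))
```

With dynamic masking the model is asked to recover different words on different passes over the same sentence, so it gets more varied training signal from the same data.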

GPT-3 and GPT-4: Autoregressive Language Prediction

GPT-3 and GPT-4 are autoregressive language models: they generate text one token at a time, each time predicting the next token from everything written so far. GPT-3 has 175 billion parameters. OpenAI has not published the size of GPT-4, its successor, but reports better accuracy and contextual understanding than GPT-3. Both models produce coherent and contextually relevant text that resembles human language.

In practical terms, they find use in content creation, customer service, and coding assistance. They are widely used for drafting articles and blog posts and for providing language-based functionality across various industries.

Their value in real-world applications lies in generating human-like text efficiently and in assisting professionals, including those working on data science projects.
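Autoregressive generation boils down to a simple loop: ask the model for the probabilities of the next token, pick one, append it, and repeat. The sketch below shows that loop in pure Python. The `toy_model` function is a hypothetical stand-in for a trained network and only looks at the last token, whereas a model like GPT-3 conditions on the whole sequence so far.

```python
import random

def generate(next_token_probs, prompt, max_new_tokens, rng, end_token="<end>"):
    """Autoregressive decoding: sample one token at a time and feed it back in."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        probs = next_token_probs(tokens)
        choices, weights = zip(*probs.items())
        token = rng.choices(choices, weights=weights, k=1)[0]
        if token == end_token:
            break
        tokens.append(token)
    return tokens

def toy_model(tokens):
    """Hypothetical stand-in for a trained model: a lookup on the last token."""
    table = {
        "the": {"cat": 0.6, "dog": 0.4},
        "cat": {"sat": 1.0},
        "dog": {"sat": 1.0},
        "sat": {"<end>": 1.0},
    }
    return table.get(tokens[-1], {"<end>": 1.0})

text = generate(toy_model, ["the"], max_new_tokens=10, rng=random.Random(0))
print(" ".join(text))
```

Sampling from the probabilities, rather than always taking the most likely token, is why the same prompt can yield different outputs from one run to the next.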

Stochastic Models: Markov Chain

A Markov Chain is one of the simplest stochastic models used in text generation. It predicts the next word in a sequence from the probability of transitioning to it from the current word, or from the last few words in higher-order chains.

Because it only looks at this short window, a Markov Chain captures local patterns in the input data and produces text that sounds natural over a few words but tends to lose coherence over longer passages.

For that reason, Markov Chains are mostly used today as lightweight baselines or teaching tools, while Recurrent Neural Networks and Transformers, which learn much longer context, are used when accuracy and fluency matter.
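A word-level Markov Chain text generator fits in a few lines of Python. The sketch below builds a first-order chain from a made-up sentence and then walks it. Storing every observed follower in a list means that a random pick from the list is automatically weighted by how often each transition occurred.

```python
import random
from collections import defaultdict

def build_chain(text):
    """Map each word to the list of words that follow it in the text."""
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return chain

def walk_chain(chain, start, length, rng):
    """Generate up to `length` words by repeatedly sampling a follower."""
    word = start
    output = [word]
    for _ in range(length - 1):
        followers = chain.get(word)
        if not followers:
            break  # dead end: this word was never followed by anything
        word = rng.choice(followers)
        output.append(word)
    return output

# Tiny illustrative corpus (made up for this example).
corpus = "the cat sat on the mat and the dog sat on the rug"
chain = build_chain(corpus)
sentence = walk_chain(chain, "the", 8, random.Random(0))
print(" ".join(sentence))
```

Every adjacent pair of words in the output appeared somewhere in the corpus, but nothing ties the end of the sentence to its beginning, which is the weakness that longer-context models address.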

Deep Learning Models: Deep Belief Networks

Deep Belief Networks (DBNs) are deep learning models that learn layered representations of data without needing labels. This allows them to discover the features needed for detection or classification automatically.

DBNs consist of multiple layers of stochastic, latent variables. They have connections between the layers but not within the layers. This hierarchical architecture enables them to capture complex patterns in data, which is why they have been explored for representing and generating text.
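The layered, stochastic structure can be sketched as follows. Each unit in a layer is switched on with a probability computed only from the layer below, never from its neighbours in the same layer. The weights and the input vector are made-up values, and training (typically done one layer at a time) is omitted, so this shows only how a signal moves up through an already-defined stack.

```python
import math
import random

def sigmoid(x):
    """Logistic function mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def sample_layer(inputs, weights, biases, rng):
    """Sample binary units given the layer below.

    weights[i][j] connects input i to unit j. Units in the same layer
    do not depend on each other.
    """
    units = []
    for j, bias in enumerate(biases):
        activation = bias + sum(v * weights[i][j] for i, v in enumerate(inputs))
        p_on = sigmoid(activation)
        units.append(1 if rng.random() < p_on else 0)
    return units

def propagate_up(visible, layers, rng):
    """Pass a visible vector up through a stack of (weights, biases) layers."""
    state = visible
    for weights, biases in layers:
        state = sample_layer(state, weights, biases, rng)
    return state

# Hypothetical stack: 3 visible units -> 2 hidden units -> 1 hidden unit.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.2]], [0.0, 0.0]),
    ([[1.0], [-1.0]], [0.0]),
]
top = propagate_up([1, 0, 1], layers, random.Random(0))
```

Because the units are sampled rather than computed deterministically, repeated passes with different random seeds can give different top-level states for the same input.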

Unlike Recurrent Neural Networks and Transformers, DBNs have no built-in notion of word order, so they are not well suited to capturing long-range dependencies in sequences. That ability, which is crucial for generating coherent and contextually relevant text, is better served by sequence models.

In practice, DBNs have mostly been used to learn features from data, for example compact representations of documents, rather than to write text directly. Tasks such as dialogue systems, summarization, and customer service responses are now typically handled by Transformer-based models.

DBNs remain historically important because they showed that deep networks could learn useful patterns from large amounts of data, an idea that today’s AI text generation builds on.

Applications of Text Generation in the Real World

Content Creation and Marketing

AI-powered text generation has changed content creation and marketing. It helps quickly produce written material by using algorithms and language models. This enables the generation of relevant text for articles, blog posts, and marketing content.

It offers advantages in efficiency and productivity, letting businesses create more content in less time. However, automated text creation may occasionally introduce errors and lack common sense, which can affect the quality and authenticity of the content.

Choosing the right model for a text generation project involves considering the project’s requirements, the type of content needed, and the required accuracy and coherence. Generative models like GPT are suited to writing content, while encoder models like BERT and RoBERTa are better suited to analyzing or classifying it, so each has its strengths and weaknesses. It’s important to evaluate and compare these models to choose the best one for the project. There are several ChatGPT-like alternatives available for content creators and marketers today.

In sum, AI-powered text generation enhances content creation and marketing, improving efficiency and productivity. However, it’s essential to strike a balance between the benefits and limitations of automated text creation and select the most suitable model for each project’s specific goals.

Automated Customer Support

Automated customer support can enhance the customer experience by responding promptly and effectively to questions and concerns. AI-powered text generation for customer support offers several benefits, including 24/7 availability, instant responses, and personalized interactions. These algorithms can analyze customer inquiries and generate relevant, coherent text to address their needs.

However, implementing automated customer support through text-generation algorithms has potential challenges and limitations. These include occasional errors in understanding complex queries and a lack of human empathy in responses. Additionally, these algorithms may struggle with colloquial language and sarcasm, leading to inaccurate or insensitive responses.

It’s important for businesses to continuously monitor and improve these systems to ensure effective and empathetic customer interactions.

Personal Assistants and Chatbots

Personal assistants, like Siri and Alexa, perform tasks like setting reminders, answering questions, and controlling smart home devices. On the other hand, chatbots are mainly used for customer service, providing automated responses, and facilitating conversations on websites and social media.

Both can be integrated into different industries. For example, in healthcare, personal assistants can help schedule appointments and provide medication reminders, while chatbots can be used for patient support. In e-commerce, chatbots can assist with product recommendations, and personal assistants can streamline the ordering process.

Using personal assistants and chatbots for text generation raises ethical concerns, including the spread of false information, manipulation of public opinion, and potential impact on employment in fields such as journalism. There’s also a risk of biased data leading to discriminatory language. Strict guidelines and ethical standards are crucial for the development and use of these technologies.

Advantages of Automating Text Creation

Efficiency in Producing Large Volumes of Text

AI-powered text generation has improved the efficiency of producing text. It automates the content creation process and can handle vast amounts of data to generate relevant text. This reduces the time and effort needed for manual writing. The advantages of using AI for text generation include faster production, consistent language patterns, and the ability to handle large projects without sacrificing quality.

When selecting the right model for a text generation project, consider criteria such as language processing capabilities, training methods, and track record for generating coherent text. Also, consider the specific needs of the project, like the type of content and the target audience, to choose the most suitable algorithm for efficient text production.

Customization and Personalization Opportunities

AI-powered text generation can tailor content to individual readers across many industries and applications.

For example, businesses can tailor their marketing content to individual customers, offering a more personalized user experience. AI-powered text generation can also be used in data science projects to create customized outputs, such as reports and product descriptions. Companies can efficiently produce large volumes of personalized content, improving productivity. In customer service, AI-generated text can provide personalized responses to customer inquiries, thereby enhancing the overall service experience. This customization can also be used in academic settings to provide more individualized feedback to students.

Augmenting Data Science and Analysis

Automating text creation in data science and analysis has many advantages. AI-generated text algorithms make data analysis tasks more efficient, resulting in improved insights.

For example, language models like GPT and PaLM utilize neural networks to comprehend sentence structure and produce coherent text. This can help turn analysis results into readable summaries, articles, and blog posts.

However, there are challenges and limitations to consider. AI text generation may occasionally produce errors and lack common sense, which can impact the accuracy of the analysis. Concerns about AI-generated content have led to the development of AI content detection tools to combat plagiarism and fraud in academic settings.

Integrating different text generation models with data science projects can enhance analysis and insights. Models like Recurrent Neural Networks, Generative Adversarial Networks, and Markov Chains can process large amounts of data and generate human-like language patterns, contributing to a more comprehensive analysis. Even when AI tools are accurate, it’s important to use multiple assessment methods to verify the results of data analysis.

Challenges and Limitations of AI in Text Generation

The Intricacies of Human Language Understanding

AI-powered text generation involves encoding semantics, grammar, and context into algorithms. This enables machines to produce coherent and contextually relevant content. Advanced language models, such as GPT and PaLM, utilize neural networks to comprehend sentence structures and generate text that resembles human language.

Ethical concerns and potential misuse pose challenges in AI text generation, particularly in academic settings, where AI-generated content may lead to academic dishonesty. Detectors built on models such as BERT and RoBERTa have been developed to identify AI-generated content and deter academic fraud.

When selecting a model for text generation, considerations should include accuracy, coherence, and contextual relevance. Performance benchmarks should evaluate the model’s ability to process and generate text that is accurate and contextually appropriate, ensuring the quality of the output.

These intricacies and considerations are important in developing and applying AI-generated text algorithms.

Controlling for Quality and Relevance

The quality, relevance, and accuracy of generated content can be controlled through effective training and stringent validation processes. By using high-quality training data and fine-tuning language model parameters, developers can enhance the algorithms’ ability to produce coherent and contextually relevant text.

Additionally, implementing human review and feedback loops can help identify and correct any inaccuracies or inconsistencies in the generated content.

AI-powered text generation models should undergo rigorous testing and validation procedures to meet quality standards. This includes benchmarking against human-authored texts and industry-specific standards.

Ethical considerations in controlling for quality and relevance in AI-powered text generation involve ensuring that the generated content does not disseminate misinformation, perpetuate bias, or contain harmful language.

Developers must prioritize transparency and accountability in disclosing the use of AI-generated content and clearly attribute human-authored work.

Ethical Considerations and Misuse Potential

Ethical considerations about AI text generation are critical. Misusing this technology to create and spread false information can be a big problem. Without good oversight, people might use AI to make fake news, copy academic work, or create deceptive ads. To prevent this, developers should establish robust processes for verifying AI-generated text. They should also disclose when text has been generated by AI.

It’s also important to continually improve tools for detecting AI-generated content and preventing cheating across various industries. In schools, detectors built on AI models like BERT and RoBERTa can aid in identifying AI-generated text. However, we should also acknowledge the limitations of these tools and explore alternative methods for managing AI-generated text.

Selecting the Right Model for Your Text Generation Project

Criteria for Model Evaluation

One important way to evaluate a text generation model is by examining how well it produces language that is coherent and contextually relevant. This involves checking the accuracy and smoothness of the generated text, as well as whether it maintains the same tone and style as the input data. It’s also crucial for the model to reduce mistakes and produce language that sounds human-like.

Comparing the model’s performance with existing benchmarks and similar models is a key part of evaluation. This helps understand how well the model can write in a way that makes sense, flows well, and stays relevant.

It’s also important to consider how the model can be used in data science projects. This involves assessing how well the model performs with various types of input data and how much it improves the efficiency and quality of text generation in that setting.

Performance Benchmarks and Comparisons

Performance benchmarks are important for comparing different text generation models. They establish standardized metrics for evaluating factors like coherence, contextuality, and error rates. This enables a comprehensive evaluation of model performance.
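The article does not name a specific metric, but one commonly used for language models is perplexity, which measures how surprised a model is by a piece of text. The sketch below computes it from the probabilities a model assigned to each token that actually appeared. The probability values are hypothetical.

```python
import math

def perplexity(token_probs):
    """Perplexity = exp of the average negative log-probability per token."""
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)

# Hypothetical probabilities two models assigned to the same four-token text.
confident_model = [0.9, 0.8, 0.85, 0.9]
uncertain_model = [0.2, 0.1, 0.25, 0.15]

confident_score = perplexity(confident_model)
uncertain_score = perplexity(uncertain_model)
```

Lower is better: a model that assigns every token a probability of 0.25 has a perplexity of 4, as if it were choosing uniformly among four options. Perplexity says nothing directly about coherence or factual accuracy, so it is usually combined with other metrics and human review.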

When evaluating text generation models for a specific project, criteria such as accuracy, speed, scalability, and suitability for the intended application are crucial. These ensure that the selected model aligns with the project’s requirements and objectives.

Integration with Data Science Projects

Text generation algorithms play a significant role in data science projects. For instance, Recurrent Neural Networks analyze and generate sequential data, making them useful for time-series analysis and natural language processing tasks. Similarly, Generative Adversarial Networks can generate synthetic data for training machine learning models. Transformers excel at processing large amounts of text data, suitable for text analysis and summarization in data projects.

When choosing a text generation model for integration with data science projects, consider its ability to understand sentence structure, generate coherent text, and its suitability for the specific project requirements. Automated text creation and language generation enhance tasks such as content creation, customer service interactions, and coding assistance. These algorithms can help generate articles and blog posts, providing valuable resources for tech professionals.

However, it’s essential to acknowledge that while these tools complement human intelligence, they may still have limitations and occasional errors that should be carefully accounted for in data science projects.

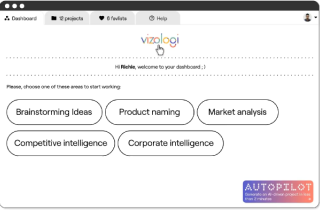

Vizologi is a revolutionary AI-generated business strategy tool that offers its users access to advanced features to create and refine start-up ideas quickly.

It generates limitless business ideas, gains insights on markets and competitors, and automates business plan creation.