How the ChatGPT AI Algorithm Powers Bots

Chatbots can understand and respond to your queries with ease thanks to the ChatGPT AI algorithm. This technology lets bots hold natural, human-like conversations with users, offering a smooth and personalized experience. ChatGPT builds on recent breakthroughs in artificial intelligence to process language, keep track of context, and generate coherent responses in real time.

Let’s explore how this cutting-edge algorithm is revolutionizing the way we interact with bots in our everyday lives.

Understanding ChatGPT: The AI Behind Smart Bots

What is ChatGPT Exactly?

ChatGPT is an AI tool that can answer questions, write text, and hold natural conversations. It runs on advanced models such as GPT-3.5 Turbo and GPT-4, which were trained on large amounts of internet data. It can help with a wide range of tasks and keep track of earlier messages within a conversation.

ChatGPT’s language skills come from large language models (LLMs), which learn the relationships between words from their training data. These models are built on the transformer architecture, whose self-attention mechanism lets the model weigh how each word relates to the others around it. Training on more data helps them capture these relationships better. ChatGPT is then refined with supervised fine-tuning, a reward model, and reinforcement learning from human feedback to improve how well it understands and responds to people.

ChatGPT and DALL-E: Cool Tools for Creating Stuff

ChatGPT and DALL-E are two AI tools with impressive capabilities for creating content. ChatGPT can write original copy, answer questions, and hold conversations in response to natural language prompts, while DALL-E generates images from text descriptions. ChatGPT uses advanced language models such as GPT-3.5 Turbo and GPT-4, and it makes conversations feel real by keeping earlier messages in context. Both tools are trained with a mix of learning methods, including supervised and unsupervised learning.

These AI tools have practical applications in content creation for industries such as digital marketing, customer support, and creative writing. Between them, they can also produce product ideas (ChatGPT) and original art and visuals (DALL-E) for different applications.

How ChatGPT Makes Conversations Feel Real

Learning Styles: How ChatGPT Gets Smarter

ChatGPT is trained in several ways. It is built on models such as GPT-3.5 Turbo and GPT-4, which are trained on large amounts of internet data. This broad training helps ChatGPT understand and respond to many different prompts and questions.

ChatGPT’s training combines unsupervised and supervised methods. In pre-training, the model learns from large amounts of unlabeled text by predicting the next word, a form of unsupervised learning often called self-supervised learning. This builds its general language understanding. The model is then fine-tuned on example responses written by humans (supervised fine-tuning) and further refined with reinforcement learning from human feedback (RLHF), which steers its outputs toward human preferences.

Natural language processing ties these pieces together. It lets the chatbot keep track of earlier messages in a conversation, hold a dialogue, and fit into different workflows, which makes ChatGPT useful across many communication styles.

Guide to ChatGPT Learning: Supervised vs. Unsupervised

Supervised learning in ChatGPT involves giving the AI labeled data. This helps the system understand patterns and make accurate predictions.

Unsupervised learning allows ChatGPT to learn from unlabeled data. This helps it recognize hidden patterns and generate insights on its own.

ChatGPT uses supervised learning to fine-tune its conversational abilities. It is trained on example conversations written by human trainers, and human feedback is then used to improve its responses further.

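The unsupervised aspect, pre-training, lets the AI learn from large amounts of unstructured text without explicit labels. The next word in each passage serves as its own training target, so the model picks up patterns and context without human guidance.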
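The difference between the two kinds of training data can be sketched in a few lines of Python. This is a minimal illustration, not OpenAI’s pipeline: the token IDs are made up, `pretraining_examples` and `sft_example` are hypothetical helper names, and leaving prompt tokens out of the fine-tuning loss is a common practice assumed here rather than a documented ChatGPT detail. In the unsupervised case the labels come from the text itself; in the supervised case a human-written response supplies them.

```python
def pretraining_examples(token_ids, context_size):
    """Unsupervised (self-supervised): each target is simply the next token of the text."""
    examples = []
    for i in range(1, len(token_ids)):
        # Up to `context_size` preceding tokens form the input; no human labeling is needed
        context = token_ids[max(0, i - context_size):i]
        examples.append((context, token_ids[i]))
    return examples


def sft_example(prompt_ids, response_ids):
    """Supervised: a human-written response is the label for a given prompt (hypothetical helper)."""
    inputs = prompt_ids + response_ids
    # Assumed convention: 1 = train the model to predict this token, 0 = prompt token left out of the loss
    loss_mask = [0] * len(prompt_ids) + [1] * len(response_ids)
    return inputs, loss_mask


# Made-up token IDs for illustration
print(pretraining_examples([5, 9, 2, 7], context_size=2))
# [([5], 9), ([5, 9], 2), ([9, 2], 7)]
print(sft_example([1, 2, 3], [8, 4]))
# ([1, 2, 3, 8, 4], [0, 0, 0, 1, 1])
```

The first function needs nothing but raw text, which is why pre-training can use enormous datasets; the second needs a person to write the response, which is why supervised data is smaller and more expensive.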

Both supervised and unsupervised learning have their own pros and cons.

- Supervised learning helps ChatGPT make more accurate predictions with higher precision. However, it needs a lot of labeled data and may be less adaptable to new information.

- Unsupervised learning, on the other hand, offers flexibility and adaptability to new data. However, without labeled guidance it may be more prone to errors and lower precision.

What’s a Transformer? How ChatGPT Uses It

ChatGPT processes language with the transformer architecture, which is widely used for natural language tasks. Transformers are designed for sequential data and capture complex relationships between words through self-attention, which lets each token weigh the relevance of the other tokens in its context. This allows ChatGPT to take the conversation so far into account, up to the limit of its context window, and give more coherent responses.

By building on transformers, ChatGPT can generate text, hold conversations, and complete language-based tasks, which is why the architecture is central to its human-like interactions. Transformer-based models such as GPT-3 and GPT-4, trained on vast amounts of internet data, enable ChatGPT to provide more relevant and coherent responses.
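The self-attention step can be illustrated with a small pure-Python sketch of single-head scaled dot-product attention with a causal mask, the variant used in GPT-style models, where each token may attend only to itself and earlier tokens. It is deliberately simplified and is not ChatGPT’s implementation: a real transformer computes queries, keys, and values with learned projections, runs many attention heads in parallel, and stacks many layers, none of which is shown. The toy vectors are invented for illustration.

```python
import math


def softmax(scores):
    # Subtract the maximum before exponentiating for numerical stability
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def causal_self_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for i, q in enumerate(queries):
        # Position i is scored only against positions 0..i (the causal mask)
        scores = [dot(q, keys[j]) / math.sqrt(d_k) for j in range(i + 1)]
        weights = softmax(scores)
        # Output = attention-weighted average of the visible value vectors
        out = [sum(w * values[j][c] for j, w in enumerate(weights))
               for c in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy 2-D token vectors, reused as queries, keys, and values
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, w = causal_self_attention(x, x, x)
print(w[0])       # [1.0]: the first token can only attend to itself
print(len(w[2]))  # 3: the last token attends to all three positions
```

Each row of weights sums to 1, so every output is a blend of the earlier tokens’ values, weighted by how strongly they match the current token.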

Playing with Building Blocks: Tokens in ChatGPT

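Tokens are the basic units of text that ChatGPT’s language model works with. Before any processing, text is split into tokens, which can be whole words, parts of words, or punctuation marks, and each token is mapped to a number the model can compute with. Everything ChatGPT reads and writes passes through this step, which makes it essential to its conversational abilities.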

During training, a vast dataset of text is converted into tokens, and the large language model (LLM) learns language patterns from these token sequences. GPT models are trained with next-token prediction: given the tokens so far, the model learns to predict the one that comes next. (Masked language modeling, where hidden tokens in the middle of a passage are filled in, is used by other models such as BERT.) This objective is what allows ChatGPT to generate coherent and relevant responses one token at a time.
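To make tokens and next-token prediction concrete, here is a toy sketch with two loud simplifications. First, the tokenizer just splits on whitespace, whereas GPT models use subword tokenizers based on byte pair encoding. Second, the "model" is a bigram counter that predicts whichever token most often followed the current one, standing in for the neural network. The function names and the sample sentence are hypothetical.

```python
from collections import Counter, defaultdict


def tokenize(text):
    # Simplification: lowercase and split on whitespace (real GPT tokenizers use subwords)
    return text.lower().split()


def build_vocab(tokens):
    # Give each distinct token an integer ID in order of first appearance
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


def train_bigram(tokens):
    # Count how often each token follows each other token (a stand-in for a neural network)
    follows = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        follows[prev][nxt] += 1
    return follows


def predict_next(follows, token):
    # Most frequent follower of `token`, or None if the token was never seen with a follower
    if token not in follows:
        return None
    return follows[token].most_common(1)[0][0]


corpus = "the bot answers the question and the bot asks a question"
tokens = tokenize(corpus)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]
model = train_bigram(tokens)
print(ids)                        # [0, 1, 2, 0, 3, 4, 0, 1, 5, 6, 3]
print(predict_next(model, "the")) # bot
```

A real LLM replaces the counting table with a transformer that conditions on the whole preceding context, not just one token, and outputs a probability for every token in its vocabulary.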

ChatGPT Gets Schooled: Learning from Humans

ChatGPT learns from humans and improves its conversational abilities through reinforcement learning from human feedback (RLHF). In this technique, human trainers rank alternative responses from the model, and those rankings are used to fine-tune the model so that its outputs align with human preferences.

RLHF builds on supervised fine-tuning (SFT) and a reward model (RM), which together address potential errors and misalignment. Large language models like GPT-3 can produce outputs inconsistent with human expectations because their core training objective, predicting the next token of internet text, does not directly reward being helpful or accurate. RLHF reduces these problems, helping ChatGPT better follow the nuances of human language and conversational expectations.
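OpenAI has said ChatGPT was trained with RLHF using the same methods as its earlier InstructGPT model. In the commonly described formulation of that reinforcement learning step, the value maximized for each response is the reward model’s score minus a penalty, weighted by a coefficient beta, for drifting away from the supervised fine-tuned model. The sketch below computes only that per-response value in a simplified form; the log-probabilities and `beta` are illustrative assumptions, `kl_penalized_reward` is a hypothetical name, and the actual policy update (done with PPO) is not shown.

```python
def kl_penalized_reward(rm_score, policy_logprobs, reference_logprobs, beta=0.1):
    """Reward-model score minus beta * log(pi_RL(y|x) / pi_SFT(y|x)) for one response.

    policy_logprobs / reference_logprobs: per-token log-probabilities of the SAME
    response under the model being trained and under the frozen SFT model.
    """
    # The log of a ratio of sequence probabilities is the sum of per-token log-prob differences
    log_ratio = sum(p - r for p, r in zip(policy_logprobs, reference_logprobs))
    return rm_score - beta * log_ratio


# Hypothetical numbers: the trained model now favors this response more than the SFT model did
print(kl_penalized_reward(2.0, [-0.5, -1.0], [-0.7, -1.2], beta=0.5))  # approximately 1.8
```

The penalty is there so the model cannot drift into unusual text that merely exploits quirks of the reward model; a larger `beta` keeps it closer to the supervised starting point.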

Chatting Up a Storm: Where ChatGPT’s Language Skills Come From

ChatGPT’s Brain: The Power of Natural Language Processing

Natural language processing is an important part of ChatGPT’s brain. It helps ChatGPT understand and produce human-like text. ChatGPT relies on large language models (LLMs) that process huge amounts of text data and learn the connections between words, which lets it write in a natural, coherent way.

Human feedback then refines its responses so they better match what people prefer, and the combination of training methods described earlier further sharpens its language skills.

With these methods and this architecture, successive versions of ChatGPT have become better at conversing like a human. Natural language processing is what lets ChatGPT hold natural, relevant conversations, and it is a large part of what makes ChatGPT such an advanced and flexible AI tool.

Transforming Words into Actions: The Magic of ChatGPT

Teaching ChatGPT: From Start to Smart

ChatGPT learns to hold better conversations through human feedback. Its training uses both supervised fine-tuning and a reward model to steer it toward outputs that match what people expect, and this feedback process is repeated over several rounds of training. Testing ChatGPT in the real world is also important: this means checking how well it responds across different domains and whether its answers stay clear and relevant.

There are still challenges in making it behave naturally, avoid bias, and work well with different systems. And because competition in AI is intense, ChatGPT needs to keep evolving to stay competitive.

Fine-Tuning ChatGPT: Teaching It Right from Wrong

Fine-tuning ChatGPT requires thinking about what is right and wrong. Human feedback is used to teach ChatGPT how to respond in the best way, which helps it learn to favor responses that people judge to be appropriate, and the reward model reinforces that behavior. A key challenge is that the model’s outputs may not reflect human values. Supervised fine-tuning and the reward model are used to address this, helping ChatGPT match what humans expect.

Using these techniques is important to make sure ChatGPT behaves well in different situations.

ChatGPT’s Personal Trainer: The Reward Model

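ChatGPT’s personal trainer is the reward model. It is trained on human feedback, in which people rank several model responses to the same prompt, and it learns to score responses the way those people would. During reinforcement learning from human feedback, these scores guide training and improve the model’s alignment.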

Because its scores reflect human judgments, the reward model lets ChatGPT be fine-tuned toward human preferences and values, making its outputs more consistent with human expectations.

In real-world applications, the reward model notably improves ChatGPT’s performance by addressing potential misalignment issues in large language models like GPT-3. This makes the AI chatbot more reliable and user-friendly in various workflows.

Using supervised fine-tuning and the reward model, ChatGPT is better equipped to deliver responses that align with human intent and meaning. This significantly enhances user experience and trust in ChatGPT’s capabilities for natural language processing tasks.
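How does a reward model learn from rankings? In the InstructGPT-style setup that ChatGPT follows, the model is trained so that the response people preferred receives a higher score than the one they rejected. A standard loss for one such comparison is -log(sigmoid(score_chosen - score_rejected)). The sketch below implements that loss for scalar scores; in practice the scores come from a neural network, which is not shown, and the example values and function names are hypothetical.

```python
import math


def pairwise_preference_loss(score_chosen, score_rejected):
    """-log(sigmoid(score_chosen - score_rejected)) for one human comparison."""
    margin = score_chosen - score_rejected
    # Two algebraically equal forms, chosen so math.exp never overflows
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def average_loss(comparisons):
    # comparisons: list of (score_of_preferred, score_of_rejected) pairs
    return sum(pairwise_preference_loss(c, r) for c, r in comparisons) / len(comparisons)


print(round(pairwise_preference_loss(2.0, 0.0), 4))  # 0.1269: preferred answer already scores higher
print(round(pairwise_preference_loss(0.0, 2.0), 4))  # 2.1269: ranking is wrong, so the loss is large
```

Minimizing this loss pushes the preferred response’s score up and the rejected one’s down, so the reward model ends up ranking responses the way the human labelers did.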

Upgrading ChatGPT: The Advanced Training Session

The advanced training session for ChatGPT involves GPT language models, specifically GPT-3.5 Turbo and GPT-4. These models are trained on extensive internet data using generative pre-training and the transformer architecture.

The session covers supervised and unsupervised learning methods for training AI models. It focuses on the transformer architecture and self-attention process. It also includes reinforcement learning from human feedback to mitigate misalignment issues during the model’s training. It uses supervised fine-tuning and the reward model to ensure the chatbot aligns with human preferences in its outputs.

This advanced training upgrades ChatGPT’s language skills for tasks such as answering questions, writing copy, and holding conversations in response to natural language prompts. ChatGPT can also keep track of earlier turns in a conversation and integrate with other apps, which broadens its use across different workflows.

Together, this extensive training and these reinforcement learning techniques prepare ChatGPT’s language skills for real-world scenarios.

To keep ChatGPT aligned and performing well, reinforcement learning from human feedback guides the learning process and aligns the model with human preferences. Large language models like GPT-3 are first pre-trained and then fine-tuned using SFT and an RM to address misalignment issues and improve the chatbot’s performance.

How to Play Nicely: Making Sure ChatGPT’s Aligned

Double-Checking: How to Make Sure ChatGPT Knows its Stuff

ChatGPT’s responses are not always accurate, so users should double-check important facts against reliable sources rather than taking answers at face value. They should also watch for common mistakes and misunderstandings, such as plausible-sounding but incorrect answers or a misread question, and rephrase or add context to the prompt when these occur. Catching these issues early leads to more accurate and reliable results.

What Can Go Wrong: When ChatGPT Gets Mixed Up

Common issues when ChatGPT gets mixed up:

- Generating inaccurate or inappropriate responses.

- A model trained on internet data can produce outputs that clash with human expectations and values.

To improve the accuracy and appropriateness of ChatGPT’s responses:

- Techniques like supervised fine-tuning and reward modeling can be used.

- These guide the learning process to align with human preferences.

Steps to prevent ChatGPT from getting mixed up:

- Use reinforcement learning from human feedback for fine-tuning.

- Human input guides the process to reduce misalignment issues.

By combining these approaches, ChatGPT can provide more accurate, contextually relevant responses and reduce the chances of getting mixed up.

Putting ChatGPT to the Test: Real-World Performance

ChatGPT performs differently in real-world conversations compared to controlled training environments. Challenges like maintaining context over long conversations, handling ambiguous language, and addressing unexpected cases arise. To enhance its real-world performance, ChatGPT can benefit from diverse training datasets covering various topics, reinforcement learning using human feedback, and refining its self-attention mechanism for better context understanding.
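One reason long conversations are hard is that a model can read only a fixed number of tokens at once (its context window), so an application has to decide which earlier messages to send with each new turn. The sketch below shows one simple strategy, assumed here rather than taken from ChatGPT’s actual implementation: keep the system instruction, then as many of the most recent messages as fit in a token budget. The role/content message format, `count_tokens` (which counts words as a stand-in for real tokens), and `fit_to_context` are all hypothetical.

```python
def count_tokens(text):
    # Hypothetical stand-in: real models count subword tokens, not words
    return len(text.split())


def fit_to_context(messages, max_tokens):
    """Keep the first (system) message plus the most recent messages that fit the budget."""
    system, history = messages[0], messages[1:]
    budget = max_tokens - count_tokens(system["content"])
    kept = []
    # Walk backwards from the newest message; stop at the first one that no longer fits
    for msg in reversed(history):
        cost = count_tokens(msg["content"])
        if cost > budget:
            break
        kept.append(msg)
        budget -= cost
    return [system] + list(reversed(kept))


conversation = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "What is a transformer?"},
    {"role": "assistant", "content": "A neural network architecture based on attention."},
    {"role": "user", "content": "How does it use context?"},
]
trimmed = fit_to_context(conversation, max_tokens=17)
print([m["role"] for m in trimmed])  # ['system', 'assistant', 'user']
```

Here the oldest user question is dropped, which is exactly how a chatbot can "forget" something said early in a long conversation. Smarter strategies, such as summarizing older turns, trade extra work for better recall.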

Continuous updates, integrating advanced language models, and fine-tuning the algorithm will further improve ChatGPT’s real-world performance.

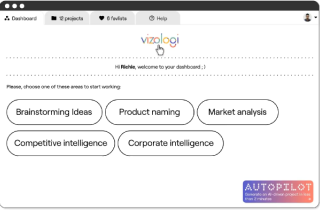

Vizologi is a revolutionary AI-powered business strategy tool that gives its users access to advanced features for creating and refining start-up ideas quickly.

It generates limitless business ideas, provides insights on markets and competitors, and automates business plan creation.