Latest in AI: Language Model Advancements

Artificial Intelligence (AI) language models are making big strides, changing how machines understand and produce human language. They’re transforming customer service and language translation tools, reshaping how we communicate with technology. As researchers push the limits of what AI can do with language, we’re entering a new era in AI-driven communication technologies.

What’s a Language Model?

A language model is a type of artificial intelligence model. It is designed to understand and generate human language. It works by analyzing patterns and structures within text data. This helps it predict the next word in a sentence or generate coherent, contextually relevant text.
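To make next-word prediction concrete, here is a minimal sketch of a bigram model, a far simpler relative of the models discussed in this article. It only counts which word follows which in a tiny made-up corpus. The function names and the corpus are assumptions for illustration, not code from any real system.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        following[current_word][next_word] += 1
    return following

def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Tiny illustrative corpus (an assumption, not real training data).
corpus = "the cat sat on the mat . the cat chased the mouse ."
model = train_bigram_model(corpus)
print(predict_next_word(model, "the"))  # prints "cat"
```

Modern language models replace these simple counts with neural networks trained on far more text, but the basic job of predicting what comes next is the same.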

For example, the GPT-3 model can produce human-like responses in conversations. BERT excels in understanding the context of words in a sentence, enabling more accurate language understanding.

Early language models laid the groundwork for more sophisticated ones. They tackled fundamental challenges in language processing, such as text generation, comprehension, and speech recognition. That foundation led to transformer-based language models, the architecture behind influential models like ChatGPT and BERT, which greatly improve what AI can do in language processing tasks.

Making Sense of Words: How Models Understand Us

Language models help machines make sense of human language. They process text and speech data to comprehend context, meaning, and nuances, generating coherent responses.

Neural networks play a big part in AI’s language understanding. They analyze vast amounts of linguistic data to detect patterns and relationships, advancing natural language processing.

AI’s use in language understanding raises ethical concerns about data privacy, bias, and misuse. It’s important to address these and ensure responsible development and use, with ethics at the forefront. This is crucial for shaping the future of AI language models and their impact on society.

The Brains Behind AI Words: Neural Networks

The transformer, a neural network architecture, has driven much of the recent progress in language AI. Models like BERT and GPT-3 have made substantial advancements in language understanding and generation, albeit through different approaches. BERT uses a transformer encoder that reads text in both directions to understand natural language, while GPT-3 is a large autoregressive transformer that generates text one token at a time.
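The difference between reading in both directions and generating text left to right can be sketched with attention masks, which decide which words each word is allowed to look at. The code below is a simplified illustration with hypothetical function names, not the actual BERT or GPT-3 implementation.

```python
def bidirectional_mask(length):
    """Every position may attend to every other position (BERT-style)."""
    return [[True] * length for _ in range(length)]

def causal_mask(length):
    """Each position may attend only to itself and earlier positions (GPT-style)."""
    return [[col <= row for col in range(length)] for row in range(length)]

def visible_tokens(tokens, mask, position):
    """List the tokens that the token at `position` is allowed to look at."""
    return [tok for tok, allowed in zip(tokens, mask[position]) if allowed]

# Example sentence chosen for illustration.
tokens = ["the", "bank", "of", "the", "river"]
size = len(tokens)

# What can the word "bank" (position 1) see under each mask?
print(visible_tokens(tokens, bidirectional_mask(size), 1))
# prints ['the', 'bank', 'of', 'the', 'river']
print(visible_tokens(tokens, causal_mask(size), 1))
# prints ['the', 'bank']
```

With the bidirectional mask, "bank" can see "river" later in the sentence, which helps settle which sense of the word is meant. With the causal mask it sees only what came before, which is the constraint a model works under when it writes text one token at a time.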

Ethical considerations in the development and deployment of AI language models are of utmost importance, as they have the potential to perpetuate biases, spread misinformation, and impact privacy. These considerations range from ensuring fairness and accountability in model outputs to safeguarding user data and preventing unintended negative consequences.

The rapid advancement in large language models underscores the ongoing debate among industry leaders about the future of generative AI, necessitating a balance between innovation and responsible deployment.

Teaching Big AI Brains to Get Smarter

Researchers are exploring ways for AI to keep improving with less human input. One idea is for models to create their own training data and fact-check their own output.

Using large language models (LLMs) and neural networks in language processing tasks has been effective in teaching AI to understand human language better.

But it’s important to consider ethics and safety when making AI smarter. This includes preventing unintended negative consequences and dealing with issues like running out of training data or producing false information.

How AI Gets Its Facts Right

Large language models learn from a lot of text data to understand and generate human-like language. They are strong at text generation and comprehension, and they can support speech recognition systems. That helps AI produce relevant information, though fluent output is not guaranteed to be accurate.

LLMs are themselves neural networks, and fine-tuning them on task-specific data improves their precision in natural language processing (NLP) tasks. Fine-tuned models are better at capturing semantic meaning, using contextual information, and classifying text.

Ethical concerns in the development and use of language models in AI focus on ensuring the safety and control of AI systems to prevent unintended negative consequences. Also, addressing potential inaccuracies and falsehoods produced by LLMs is important to gain broader market adoption and build trust in AI technology.

Experts in AI: Who Knows More?

Language models in AI support key capabilities like text generation, comprehension, and speech recognition. Progress in each of these areas helps machines understand human language better.

Experts in AI and language understanding have made notable contributions to the development of large language models. They have explored the potential of LLMs in tasks such as text completion, question answering, and semantic understanding. Advancements in tasks like text classification, contextual information processing, and precision tuning have been driven by these experts.

Models like BERT and GPT-3 have revolutionized natural language processing tasks in AI technology. They have exceptional capabilities in text comprehension, semantic understanding, and text generation, pushing the boundaries of AI language models. Their impact has paved the way for further innovations in NLP, machine learning, and deep learning.

Talking Computers Now and in the Future

Language models like BERT and GPT-3 are changing the way people interact with computers. They can understand context, create human-like responses, and provide accurate information, making the user experience better. In the future, these advancements could lead to computers that have complex conversations, understand specific user needs, and offer personalized assistance, blurring the line between human and machine interaction.

When designing talking computers powered by AI, ethical concerns need careful attention. Privacy, bias, and the possibility of spreading false information are important considerations for responsible development and use of these systems. Safeguards must protect user privacy and prevent the spread of false or harmful information. Ensuring transparency, fairness, and accountability in the design and use of these technologies is essential to build user trust and confidence.

Neural networks and other AI components are crucial for the advancement of talking computers. They enable language comprehension, speech recognition, and text generation. These components enhance the development of sophisticated language models, improving their ability to understand and respond to human language accurately and fluently. Applying neural networks and AI technology allows for ongoing improvements in language processing tasks, making communication with talking computers more natural and effective.

The Big Question: Is AI Talking Ethical?

Ethical considerations arise regarding AI’s ability to communicate, especially with large language models.

As these models advance, concerns about the potential spread of false or harmful information come to light. Additionally, the ability of LLMs to generate their own training data raises questions about the quality and accuracy of the information produced.

To ensure the ethical use of AI language models, it is essential to establish robust fact-checking mechanisms within the models themselves. This allows for self-correction and verification of generated content.

Developers and organizations should implement clear guidelines and oversight to mitigate the spread of misinformation and harmful content. This includes creating frameworks that prioritize user safety and promoting transparency in the development and deployment of AI language models.

Wordsmiths Like BERT and GPT-3

BERT: The Breakthrough

BERT, introduced by Google in 2018, marked a breakthrough in language models. Unlike rule-based programs like Eliza, or models that read text in only one direction, it processes language in both directions at once. This gives it a better understanding of words and phrases in context. BERT has made language models more accurate and useful in tasks such as question answering and text classification.

However, the use of BERT and similar models raises ethical concerns. There’s a risk of spreading false information, creating biased models, and affecting privacy and security. It’s crucial to address these ethical issues to make sure these language models are used responsibly and for the greater good.

GPT-3: The Talk of the Town

GPT-3 is a widely discussed language model. It’s known for its impressive ability to understand and create human-like text, setting a new standard in natural language processing. With 175 billion parameters, it can produce coherent and contextually relevant responses that go well beyond rule-based programs like Eliza, and it handles open-ended text generation that BERT was not designed for.

Despite its capabilities, the use of GPT-3 and similar models raises ethical concerns. There’s a risk of these models generating misleading or harmful content, requiring careful monitoring and guidance for responsible use. Moreover, the reliance on such powerful language models brings up worries about data privacy, biased language generation, and potential misuse.

These ethical considerations emphasize the importance of responsible development and deployment of advanced language models like GPT-3.

The Oldies But Goodies: Early Language Models

Oldie Eliza: The Beginnings

Eliza, created by Joseph Weizenbaum at MIT in the mid-1960s, played a big part in advancing AI and natural language processing. It could engage in simple conversations, most famously by simulating a Rogerian psychotherapist. Eliza did not learn from data. It relied on pattern matching and scripted responses, which still paved the way for more advanced language models. Its focus on mimicking human conversation set it apart as one of the first chatbots.
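The pattern-plus-script approach can be sketched in a few lines. The rules below are hypothetical examples written for this illustration. They are not taken from the original Eliza script, which was considerably more elaborate.

```python
import re

# Hypothetical rules for illustration: each pairs a pattern with a reply template.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father|family)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]
FALLBACK = "Please go on."

def respond(message):
    """Return the reply for the first matching rule, or a generic prompt."""
    cleaned = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            # Reuse the user's own words inside the scripted reply.
            return template.format(*match.groups())
    return FALLBACK

print(respond("I feel anxious about work."))  # prints "Why do you feel anxious about work?"
print(respond("The weather is nice."))        # prints "Please go on."
```

Nothing here understands the sentence. The program simply reflects the user's words back, which is why conversations with this kind of system break down quickly once the input strays from the script.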

Eliza’s influence laid the groundwork for future advancements in AI language models, shaping the future of generative AI.

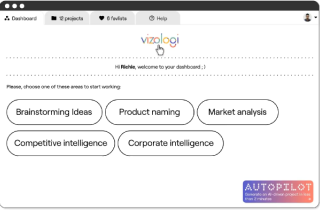

Vizologi is a revolutionary AI-generated business strategy tool that offers its users access to advanced features to create and refine start-up ideas quickly.

It generates limitless business ideas, gains insights on markets and competitors, and automates business plan creation.