I opened Pandora’s box and found Picasso.

I just created my first artwork, and I am very proud of it. I took one minute to conceive the text of the idea, “Two Picassian Lovers Walking On The Beach”, and one second to generate the artistic image.

When I finished my first work, I got carried away by the frenzy and thought of a possible second one. I love the style of the painter Basquiat, and I love giraffes, so I came up with this input for the algorithm:

“A giraffe with eagle wings flying over a pyramid in the style of Basquiat.”

Et voilà, the masterpiece appeared:

To top it off, I came up with the idea of pitting Basquiat against Picasso in an extraordinary challenge: they had to create the world’s greatest work of art. I gave the AI algorithm the prompt below:

“Basquiat and Picasso fight to create the world’s greatest work of art.”

And they got to work (never better said):

If Picasso or Basquiat were alive, they would join the party we are having with Generative Artificial Intelligence. At the end of 2020, generative text models exploded, taking an unprecedented leap with GPT-3; this summer of 2022, it is the turn of the image, which is reaching a level of innovation that will be remembered in the history books.

DALL-E, Midjourney, Imagen (from Google), and Disco Diffusion promise us an enjoyable vacation.

Signals.

The Internet is based on what we telcos call the four essential signals:

Text

Image

Audio

Video

Of the four, text (language) is the most important, because it builds the narrative base of the others. Text is used to label an image, compose the lyrics of a song, or write the script of a video; it is the underlying basis of any creation that a human wants to make.

In the not too distant future, we will work by writing very short texts (prompts), and these generative AI tools will turn them into a longer, more developed text, an image or design, the basis of a melody, or a video clip.

You must begin to familiarize yourself with the term prompt (it is one of those words that is best left in English); your work will come down to imagining prompts. You may ask yourself: what is a prompt? This is one:

“Two Picassian Lovers Walking On The Beach.”

Using that input text, I obtained an image as the output; if I had wanted to generate an article or a poem with that title, I could have done so using Writesonic.

The mechanism is always the same:

- You think of a prompt INPUT.

- You click the GENERATE button.

- You receive a text, a document, an image, audio, or video at the OUTPUT.

To generate code, for example, you don’t need to know programming languages; you write what you want to do in natural language, and tools like Copilot generate the code in the programming language of your choice. You tell it, “create a 3×3 matrix and assign a weight from 0 to 9 to each cell,” and Copilot returns the code in less than a second.

To simplify even more, in Vizologi we ask you to enter just two keywords, not even a complete prompt. From them, we generate a list of startup ideas associated with the input keywords; for example, with [CATS] and [SOCIAL MEDIA] as input, it generates as output a Tinder-like application for cats (well, rather for cat owners).
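As a rough illustration of that request, the returned code might resemble the Python sketch below. This is not actual Copilot output; the function name `make_weight_matrix`, its parameters, and the choice of random weights are assumptions made for the example.

```python
import random


def make_weight_matrix(rows=3, cols=3, low=0, high=9, seed=None):
    """Build a rows x cols matrix with an integer weight in [low, high] per cell."""
    rng = random.Random(seed)  # passing a seed makes the result reproducible
    return [[rng.randint(low, high) for _ in range(cols)] for _ in range(rows)]


if __name__ == "__main__":
    # Print the 3x3 matrix one row per line.
    for row in make_weight_matrix(seed=42):
        print(row)
```

The point is the division of labor: the natural-language sentence is the input, and a short function like this is the kind of output you then review and adapt.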

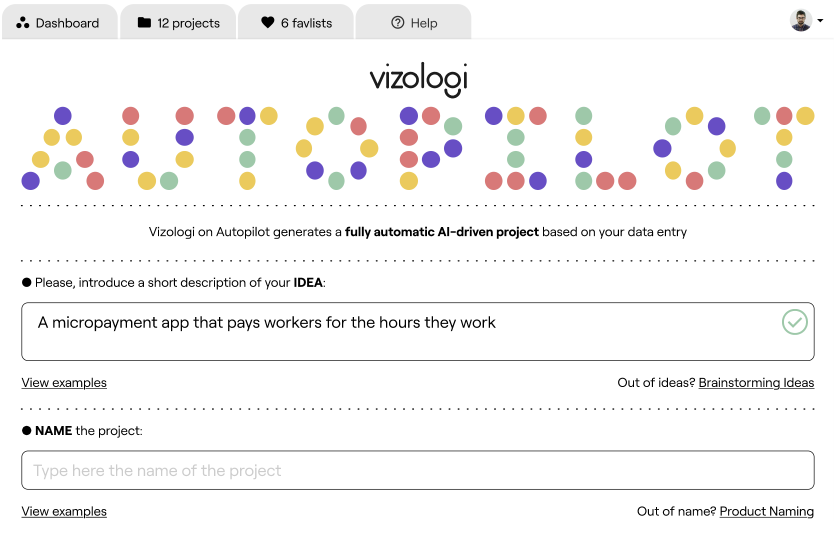

And we do not stop here; in the last quarter of this year, we will release Autopilot, which will ask you for five input fields: the idea (prompt), the name, the market, the continent, and the country.

And at the end, we generate a complete project briefing of 14 slides, including all kinds of market details, competition, business model canvas, SWOT, PESTLE, etc., all with natural language autogenerated by AI.

What’s more, next year, we will generate the logo, the brand, and the image of the idea you are developing in our software. This has just started.

State-of-the-art.

To recap, the most considerable advances to date are occurring in text. GPT-3 (the AI engine we use in Vizologi) has positioned itself as the most potent and sophisticated neural network so far, with 175 billion parameters; there is much speculation about Megatron-Turing, which a priori will offer a neural network of 530 billion parameters.

It could be said that the technology is far ahead of the real business that we, the first generative AI startups, are generating. To get an idea, on this GPT-3 demo page https://gpt3demo.com/ you will find more than 300 experiments. Of these, 40 are real products with a generative base; of those 40, only 20 are monetizing the technology and have a good customer base.

The big challenge is no longer in the technology but in how to monetize Artificial Intelligence through new products and services. It will take tons of entrepreneurs to bring these technologies to street level and reach the general public.

For all of us, entrepreneurs and early adopters, this is new. We are starting to make sense of it; neither Microsoft nor Google, with billions and billions invested, has started monetizing. Most of the investment is going into hardware and, above all, into the training of the neural networks.

GPT-3 is what it is today because Microsoft invested 1 billion dollars in OpenAI at a stroke for training, apart from offering all its hardware. Although the algorithm behind this technology has hardly changed in 60 years, the explosion we are having now comes from a conjunction of three things: the high volumes of data available since the Internet appeared, remarkable advances in the power of GPUs, and a titanic investment in the training of networks.

To give you an idea, the current neural networks for image generation are reaching 20 billion parameters. Today’s results are already spectacular, even if these networks are roughly nine times smaller than GPT-3 with its 175 billion parameters.

Nothing is more didactic than an image for explaining a concept, so here is one to convey the exponential speed of change at which we AI-native startups are moving: between the image on the right below and the one on the left, there is a nine-month difference. Think about what we will be able to do in 2030, only eight years from now.

There are also significant advances in audio, but here the databases are more restrictive due to copyright issues. Jukebox is already generating songs. For example, if you want to create a rock song, you only need to enter the prompt “Rock in the style of Elvis Presley”, load the lyrics previously generated by GPT-3, and you will get the music.

In the future, when you log in to Spotify, you will most likely give a brief input of your musical style and mood, and it will generate a playlist of auto-generated, personalized tracks in real time.

As for video, we are still in its infancy. Since video is just a sequence of 24 images per second, sooner or later, when the hardware allows it, we will generate video with AI. Below I leave an appetizer: a music video clip in which the lyrics and audio were given as input and the video was autogenerated from them:

Common mistakes when experimenting with Artificial Intelligence.

Talking to my clients daily, when they open an incident or ask me a question, I see one mistake they all share. They ask me ad nauseam, “Where is the link?” There is no link. This is AI; do not confuse it with the Internet. Using AI is not like launching a question on the Google search engine and receiving millions of links to websites. AI does not work with hypertext links: it composes text by predicting the next word after an input, pointing to vectors in an ocean of 175 billion parameters.

Another widespread mistake is typing into an AI input bar the way we do into the Google bar: we rush and type something misspelled and meaningless, such as “blahbalha juash ahahys smss”.

Google will always respond with something to this input. With AI, forget it; it does not work like that. The worse the quality of the query, the worse the AI’s result will be. To put it simply, when you face a generative AI product, imagine that you have to write the best tweet in the world and express yourself with total correctness and sophistication. The AI does not have a fixed quality of its own; it depends on you as a user. The more intelligent your input is, the more intelligent the output of the algorithm will be.
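To make “predicting the next word” concrete, here is a toy sketch in Python. It only counts which word follows which in a tiny made-up corpus, which is far simpler than the neural network behind GPT-3; the corpus and the names `train_bigrams` and `complete` are hypothetical and chosen just for this illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def complete(followers, prompt, max_words=5):
    """Extend a non-empty prompt by repeatedly appending the most frequent next word."""
    words = prompt.lower().split()
    for _ in range(max_words):
        options = followers.get(words[-1])
        if not options:
            break  # the last word was never seen with a follower
        words.append(options.most_common(1)[0][0])
    return " ".join(words)


# A tiny, invented training text standing in for "the Internet".
corpus = "two lovers walking on the beach two lovers walking on the sand at sunset"
model = train_bigrams(corpus)
print(complete(model, "two lovers", max_words=3))  # two lovers walking on the
```

Notice that nothing here looks anything up or returns a link: the output is composed word by word from patterns seen during training, which is why there is no source page to point to.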

The future will belong to the tweeters.

While preparing the documentation for this article, I found a video on YouTube that stages a challenge between a graphic designer and DALL-E: each has to produce a design for three randomly chosen creative prompts. I recommend watching it until the end. You will find the man very distressed: he faces an AI, and his colleagues vote on a final verdict without knowing which source generated each image.

In the voting on the three images, the human won two and DALL-E won one. The video does not mention the time spent; the designer takes approximately an 8-hour working day, while DALL-E generated the three images in less than 3 seconds.

In the human process, about 600 human micro-tasks are performed using Photoshop software; with DALL-E it is three clicks of the GENERATE IMAGE button.

98% of the global economy is, in some way, based on the hourly rate of someone like that designer: an architect, an industrial engineer, a programmer, a copywriter, a project manager, an artist, a film dubber, etc. All professions connected to or dependent on the information society rest on the four essential signals as fundamental pillars: text, image, audio, and video. The moment these pillars become generative, everything changes.

Our economy rests on the productivity we can extract from those 600 micro-tasks that a worker needs to perform job X, a worker who has previously had to train for years to acquire an excellent technical level in Photoshop.

With generative AI, the work process is simplified into 3 simple steps:

1/ Generate an INPUT – Where the skill needed will be creativity.

2/ Launch the automatic generative process.

3/ Obtain an OUTPUT – The necessary skill will be the criteria for validation, modification, and readaptation of the technology’s work.

Our workflows are going to change radically, and I don’t know whether we will end up with hours of work or people to spare. Let’s remember that we are at the very beginning of generative AI. In interviews already conducted with musicians and designers, they do not see a clear substitution but rather a readaptation and a profound change in the way they work.

The difference between the Internet and AI is that we use the Internet to help us perform our tasks as humans; AI, however, does not help you; it does the work directly, and here is a big difference; this change is not trivial.

Concepts and job positions such as Prompt Engineer or Professional Curator are already starting to emerge. When I say that the future will belong to the tweeters, I am referring to those who have an innate ability to condense very complex and abstract concepts into 140 characters; they will be the most employable people in the future we are already building.

The road to singularity.

Where do we stand on the road to the singularity? Perhaps we still have hope. According to the expert Geoff Hinton, the largest neural network that exists today has 175 billion parameters (GPT-3), while the human brain is a neural network of about 100 trillion synapses (parameters). Measured by parameter count, it could be said that we are still several hundred times (roughly 570 times) “smarter” than the best AI in the world to date.

To conclude the article, I have shown you that we are already in an accelerated, exponential loop. Moreover, the plans and developments that are becoming known for GPT-4 point toward the singularity, with a network reaching 100 trillion parameters. That project will take a long time: do not expect GPT-4 in 2023, but it will not take until 2029 or 2030 either, which according to futurists was the date on which the singularity would occur. In any case, there is no doubt that we are well ahead of schedule; we are going very fast.
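As a quick check of that comparison, the ratio can be computed directly from the two figures quoted. Treating one synapse as equivalent to one parameter is the simplifying assumption of this comparison, not an established equivalence, and the constant names below are made up for the example.

```python
GPT3_PARAMETERS = 175e9   # 175 billion parameters, as quoted for GPT-3
BRAIN_SYNAPSES = 100e12   # 100 trillion synapses, as quoted for the human brain

# How many GPT-3-sized networks would match the brain's synapse count.
ratio = BRAIN_SYNAPSES / GPT3_PARAMETERS
print(f"The brain has about {ratio:.0f} times more connections than GPT-3 has parameters")
```

The quotient comes out at roughly 570, so the gap is best read as a few hundred times rather than as an exact figure.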
